-

Posts

7,835 -


Everything posted by kye

-

Cool. What are the benefits of ToF vs PDAF? @BTM_Pix would know 🙂

-

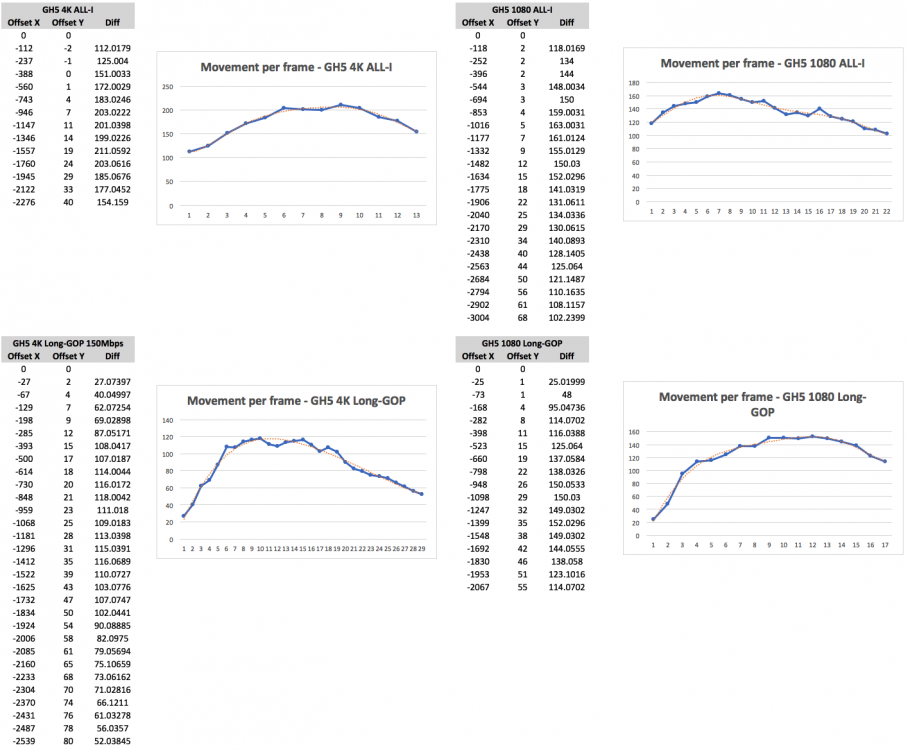

OK, here are all four combinations. The blue line is the movement per frame. The orange line is a trend line (6th order polynomial) to compare the data against: if the data goes above the trend, then below, then above, that would indicate jitter. Looking at it, there is some evidence that all of them have some jitter, possibly ringing from the IBIS. I got more enthusiastic with my pans in the later tests, so there are fewer data points and they're not really directly comparable, but they should give some idea. They appear similar to me, in that the up/down is about the same percentage of the motion in each.
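A rough sketch of the trend-line comparison described in this post, in plain Python. The movement values are made up for illustration (they are not the measured GH5 data), and the function names are hypothetical. It fits a 6th order polynomial by least squares, subtracts it from the per-frame movement, and counts how often the data crosses from one side of the trend to the other.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit. Returns coefficients, lowest power first."""
    n = degree + 1
    # Normal equations: a[i][j] = sum(x^(i+j)), b[i] = sum(y * x^i)
    a = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(a[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - tail) / a[i][i]
    return coeffs


def residuals_from_trend(movement, degree=6):
    """Per-frame movement minus the fitted trend line."""
    count = len(movement)
    # Scale frame numbers to [-1, 1] to keep the fit numerically stable
    xs = [2.0 * i / (count - 1) - 1.0 for i in range(count)]
    coeffs = fit_polynomial(xs, movement, degree)
    trend = [sum(c * x ** p for p, c in enumerate(coeffs)) for x in xs]
    return [m - t for m, t in zip(movement, trend)]


def sign_changes(values):
    """How many times the data crosses from one side of the trend to the other."""
    signs = [v > 0 for v in values if v != 0]
    return sum(1 for s, t in zip(signs, signs[1:]) if s != t)


# Hypothetical data: a smooth accelerating/decelerating pan, then the same pan
# with +/-1 px of frame-to-frame wobble added on top.
frames = [2.0 * i / 29 - 1.0 for i in range(30)]
smooth = [100 + 20 * x - 15 * x * x for x in frames]
jittery = [m + (1.0 if i % 2 == 0 else -1.0) for i, m in enumerate(smooth)]

for name, data in (("smooth", smooth), ("jittery", jittery)):
    res = residuals_from_trend(data)
    print(name, "peak-to-peak:", round(max(res) - min(res), 3),
          "crossings:", sign_changes(res))
```

The peak-to-peak of the residuals is the number to compare against the size of the motion; the crossings count only matters when the peak-to-peak is bigger than the measurement error. At least 7 frames are needed for a 6th order fit.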

-

I just made this clip in Resolve using a few keyframes. Not only will it have zero jitter, but it will also have no RS. Use it as a test for playback jitter. https://www.sugarsync.com/pf/D8480669_08693060_8819366

-

APOLOGIES ALL. The test above was GH5 4K 150Mbps h264, not the 5K mode. I just shot a few other tests in other modes and came back in Resolve to look at the files and saw 3840x2160 next to the file I used for the above. Good news is that I now have 1080p ALL-I, 1080p Long-GOP, and 4K ALL-I clips to analyse, so we'll see if there are differences.

-

I'm good at the technical, not so much with the creative. If you can, please download the clip and see if you can see the jitter. What is visible is something I need other people to help with. If there's enough appetite (especially in new camera fever season) then I might even generate some A/B tests and see what levels of jitter are visible.

-

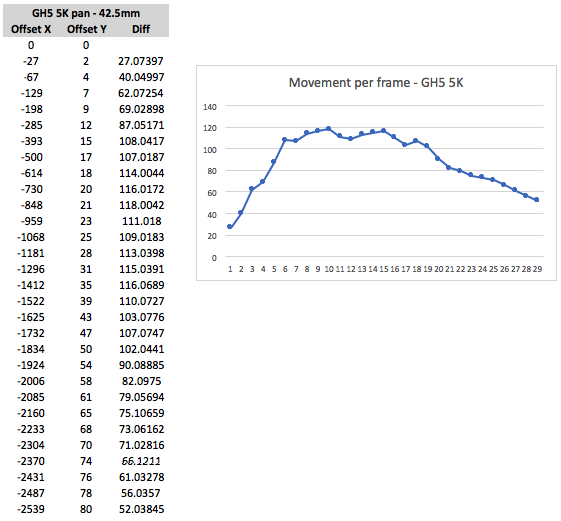

Here's the original 5K h265 file that I analysed above: https://we.tl/t-YgQIWv1Sps The frames I analysed above were the first pan from left-to-right. Let me know what you see if you watch it. Link expires in 7 days, so don't delay! Limited time offer!! *ahem*

-

I've put the GH5 to the test.

Setup was GH5 in 5K h265 mode (to stress the camera and get the most resolution), Voigtlander 42.5mm 0.95 lens focused at f0.95 then stopped down a couple of stops to sharpen up. This produced shutter speeds in the 1/10,000s range and shorter.

First test was to pan and track a stationary object, in this case the corner of a bolt in the fence, which was a sharp edge with high contrast. I put a tiny box around it, I think it was about 3-4 pixels wide/tall, and tracked it on a 4K timeline at 300% zoom. Here are the results:

Observations:

* Test produced good data, with images being sharp even in mid-pan, and the margin of error was small (only a couple of pixels) compared to the large offsets (60-120 pixels)
* Test was hand-held and between myself and the IBIS we did a spectacular job (I think it was all me, but... 😉 )
* As the offset was both horizontal and vertical I reached deep into my high-school geometry to calculate the diagonal offset using both dimensions
* There is evidence of 'ringing' in the movement, shown in the fluctuations between ~110 and 120
* This ringing may well come from the IBIS mechanism, as ringing is a side-effect of high-frequency feedback loops (of which IBIS is a classic example)

Discussion:

This 10px P-P of jitter is there in the footage, so the question is if it would be visible under more normal circumstances.

Let's start with motion blur. If I had shot this with a 180 degree shutter then the blur would be approx 60px long, making the jitter a 16% variation of the blur distance, which is small but isn't nothing. Also, if the shutter operated like a square wave, with each pixel going from not being exposed to being fully exposed instantaneously, then the edges of the blur would be sharp, although much lower in contrast.

What about timing? 10px out of 115 pixels is 8.7%. If this jitter came from the timing of the frames rather than the direction of the camera, it represents a change of about 3.6ms compared to 24p, which has a 41.66ms cadence, so at a given frame it might be ahead by 1.8ms and two frames later be behind by 1.8ms. Would this be visible? I don't know.
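The arithmetic in this post can be written out as a small Python sketch. The function names are hypothetical, and the inputs are the figures quoted in the post (10px peak-to-peak jitter, roughly 115px of movement per frame, 24p). The blur estimate assumes a 180 degree shutter exposes for half of each frame interval, which gives 57.5px (the post rounds this to approx 60px).

```python
import math


def diagonal_offset(dx, dy):
    """Combine horizontal and vertical offsets into one distance (Pythagoras)."""
    return math.hypot(dx, dy)


def blur_length_px(move_per_frame_px, shutter_angle_deg=180.0):
    """Approximate motion blur length: movement during the exposed part of the frame."""
    return move_per_frame_px * shutter_angle_deg / 360.0


def jitter_as_timing_ms(jitter_pp_px, move_per_frame_px, fps=24.0):
    """If the jitter came from frame timing, how many ms of timing error it represents."""
    frame_interval_ms = 1000.0 / fps
    return jitter_pp_px / move_per_frame_px * frame_interval_ms


blur = blur_length_px(115)                      # 57.5 px
print("blur length:", blur, "px")
print("jitter vs blur:", round(10 / blur * 100, 1), "%")
print("jitter vs movement:", round(10 / 115 * 100, 1), "%")
print("timing equivalent:", round(jitter_as_timing_ms(10, 115), 2), "ms")
```

Halving the timing figure gives the roughly 1.8ms ahead/behind mentioned in the post.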

-

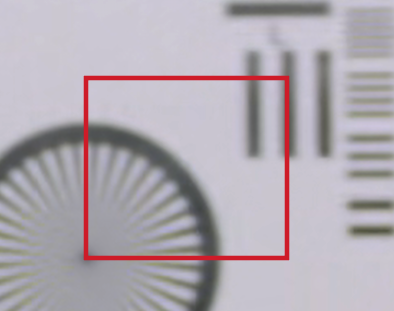

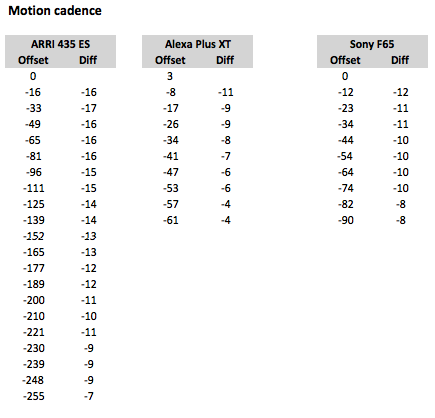

OK, analysis of this first video: (thanks to Valvula Films for sharing this footage).

In a sense, this video isn't well suited to an objective jitter test as the focus is pulled during the pan so everything is blurred for most of the pan. Regardless, the testing methodology was to create an overlay box and offset the underlying video, frame by frame, to the overlay box, and record the offset. Like this:

I compared the pan that went to the chart vs the pan away from the chart and chose the one with the greater number of frames. For each camera I chose a range of frames between when the test chart became too blurred, and when the movement became too small for single pixel measurements. Where there wasn't a perfect whole-pixel offset I chose the closest one. Where the offset to the left seemed identical to the one on the right I chose the one on the right.

Here are the results:

The first column is the offset of each frame, and the second is the movement between this frame and the last. The pan was accelerating / decelerating so the speed went up/down.

My impressions of this are:

* The numbers don't show any jitter
* My impression of which frames were bang-on vs somewhere in-between didn't seem to indicate that there are any big nasties not shown in this data
* These are all high-end cameras so it is feasible that we didn't find any jitter because there isn't any to find
* I could have gone much more in-depth and tried to offset by fractions of a pixel (Resolve will do this) but on a 1080p image any jitter less than a single pixel is probably invisible

What I learned:

* High-end cameras probably don't have much jitter (not really surprising, but let's start from a known position)
* In a test like this, blurring things isn't a good idea, either from a focus pull or from motion blur, and the more frames something moves the more precise a test would be
* A better test would be to shoot where exposure time is very short, there are fine details to track - both from a lens focus perspective as well as simply having details only a few pixels wide
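For anyone wanting to build the same two columns from their own measurements, here is a minimal Python sketch. The offsets are invented for illustration (not the figures from this test) and the function names are hypothetical. The second function, the change in movement from frame to frame, is an extra step not used in the post: in a smoothly accelerating or decelerating pan it should drift gradually rather than flip sign every frame.

```python
def frame_movement(offsets):
    """Second column: movement between each frame and the previous one."""
    return [b - a for a, b in zip(offsets, offsets[1:])]


def movement_change(offsets):
    """Change in movement from frame to frame (an added check, see note above)."""
    movement = frame_movement(offsets)
    return [b - a for a, b in zip(movement, movement[1:])]


# Hypothetical whole-pixel offsets for an accelerating pan
offsets = [0, 3, 7, 12, 18, 25]

print("offset   :", offsets)
print("movement :", frame_movement(offsets))
print("change   :", movement_change(offsets))
```

With whole-pixel measurements, a change that bounces by one pixel is within the measurement error, so only larger or repeated reversals would be worth a closer look.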

-

Love that video by Ed David - the commentary was hilarious! Nice to see humility and honesty at the forefront.

So far, my thoughts are that there might be these components to motion cadence:

* variation in timing between exposures (ie, every frame in 25p should be 40ms apart, but maybe there are variations above and below) - technical phrase for this is "jitter"
* rolling shutter, where if an object moves up and down within the frame it would appear to be going slower / faster than other objects

Things that can impact that appear to be a laundry-list of factors, but seem to fall into one of three categories:

* technical aspects that bake-in un-even movement into the files during capture
* technical aspects that can add un-even movement during playback
* perceptual factors that may make the baked-in issues and playback issues more or less visible upon viewing footage

My issue appears to be that the playback issues are obscuring the issues added at capture.

As much as I'd love to do an exhaustive analysis of this stuff, realistically this is a selfish exercise for me, as I want to 1) learn what this is and how to see it, and 2) test my cameras and learn how to use them better. If I work out that I can't see it, or it's too difficult to make my tech behave then I likely won't care about the other stuff because I can't see it 🙂

First steps are to analyse what jitter might be included in various footage, and to have a play with my hardware.
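To make the timing definition of jitter concrete, here is a tiny Python sketch. It assumes you somehow have a timestamp for each exposure, which is hypothetical (cameras don't normally expose this), and the numbers are invented. At 25p every interval should be 40ms, so the deviation of each interval from 40ms is the jitter.

```python
def timing_jitter_ms(timestamps_ms, fps=25.0):
    """Deviation of each frame-to-frame interval from the nominal interval."""
    nominal = 1000.0 / fps
    intervals = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    return [interval - nominal for interval in intervals]


# Hypothetical exposure times: the third frame is 2ms late, then back on schedule
timestamps = [0, 40, 82, 120]
print(timing_jitter_ms(timestamps))
```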

-

Both. Tried VLC and Quicktime on the file. I even tried to load it into Resolve to convert it to a different format but Resolve doesn't stoop to such lowly formats as mp4 🙂

-

Thanks @BTM_Pix - I'll keep an eye out for unicorns on ebay. I think I've hit my first issue. I've watched the video they linked and I see judder in all of them. First attempt was my MBP running my 4K display with the internal GPU, second attempt (after unplugging the external display) was the MBP running only the laptop screen, and third was plugging in my eGPU with RX 470. All attempts displayed significant judder, both when playing the video fullscreen and in a 100% window (720p). I guess if your computer sucks at displaying it, then there's not much point experimenting with capture. Any tips for improving things at my end?

-

I have a few cameras that reportedly vary with how well they handle motion cadence. I say reportedly because it's not something I have learned to see, so I don't know what I'm looking for or what to pay attention to. I'm planning to do some side-by-side tests to compare motion cadence - can you please tell me: 1) what to film that will highlight the good (and bad) motion cadence of the various cameras, and 2) what to look for in the results that will allow me to 'spot' the differences between good and bad. Thanks. I'm happy to share the results when I do the test. I'll also be testing out if there's some way to improve the motion cadence of the bad cameras, and what things like the Motion Blur effect in Resolve does to motion cadence.

-

We're agreeing. The article you linked says that "Clear Supermist is made of fine clear colorless particulate that is bonded between two pieces of Schott B270 Optical Glass which exceeds 4K resolution capability." and "Black Supermist is made of fine black color particulate that is bonded between two pieces of Schott B270 Optical Glass which exceeds 4K resolution capability."

Particulate refers to something being a bunch of particles. ie, it's tiny lumps of stuff - it's not a continuous smooth layer of something. The optical effect appears to be that when you have a bunch of stuff (lumps, bumps, patterns, whatever) very near or inside the lens, then that texture somehow becomes an in-focus mask on out-of-focus areas. This is why the texture of the meshes in the article I shared also showed up, and these were attached between the camera and the lens.

You can also use this effect for special effects: Once again, this is the shape of something close to or inside the lens creating an in-focus mask on the out-of-focus areas. Tiffen are just using a mask made of tiny particles that have optical diffraction effects rather than just casting a silhouette, although it's the same optical principle that makes the texture appear in-focus.

-

My understanding is that the speckled patterns in bokeh are to do with any textures in / on the lens. You get them if you use a mesh for diffusion: https://www.provideocoalition.com/the-secret-life-of-behind-the-lens-nets/ I also thought that it can happen if you've got dirt or something inside the lens or on the front filters?

-

I bought the Studio edition of Resolve and logged a support question some time back, and they directed it to a support partner of some kind here in Australia (I think they were a dealer or something - they had a different business name), who then emailed me back and forth to diagnose and help me out. I was trying to work out how to optimise my MBP + eGPU setup and at one point they checked with a BM engineer about something. It was a very positive experience overall, and I was kind of surprised because I'm used to being a customer of other brands where basically you get their forums or it's impossible to even find a phone number for them, so customer service isn't something I'm used to even thinking is available, let alone getting help from really knowledgeable folks.

-

More spectacular footage from the Laowa probe lens.. and here's the BTS: I watch James Hoffmann because I'm interested in coffee, so this is a completely unexpected foray into camera tech and slow-motion loveliness, but if you like coffee then I can also recommend his channel. He's a Barista World Champion, has a ridiculously nerdy interest in coffee, and is hilarious when he tries coffee products that taste terrible or reviews cheap and bad coffee equipment. You're welcome!

-

Think about it like this. FCPX, PP, Resolve, and other programs will all exist in some way over the next decade. In all likelihood they will have new versions, new features, new bugs, various frustrations and limitations, and various performance levels doing different tasks on various hardware platforms.

Over the course of the next decade, which do you feel would be the best total experience for you? ie, experiencing the ups and downs of PP for that whole time, going with an alternative for that whole time, or sticking with PP now but transitioning at some point in the future. Think about the hardware required, the whole package.

If you feel you'd be better off with another package, then think about the best time to switch. Over the next decade you'll buy multiple new computers, cameras, and other equipment, so think about the best time to switch. Factoring in the next decade, switching platforms isn't such a big deal, especially if the grass is actually greener on the other side - you'd be eating better grass long after the scrapes from climbing the barb-wire fence have healed and long been forgotten.

Changing software packages isn't a chore, it's an investment. You invest time, energy, money for the software, maybe new hardware even, but you would do so if the return was worth it over the longer-term. Think about the cost of your frustration. How it impacts your quality of life and creativity. How demotivating it is. If it's worth making that investment, then choose when is the best time. In the middle of a project with a deadline isn't the best time, and maybe there are other factors to consider too, but if it's worth switching then schedule it in your life, then do it.

-

I've only had one Sigma lens, the 18-35/1.8 but it was a great lens. I'd buy Sigma again in a heartbeat.

-

Great stuff. +1 for GH6 info. Also, more of a comment than a question, but could they please consider adding video modes in wider aspect ratios, eg, 2:1, 2.35:1, 2.66:1 etc. I know you can film 16:9 and crop in post but it wastes bitrate and storage. They currently have guides on the GH5 for these but they're so faint they're very difficult to use.
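To put a rough number on the waste, here is a small Python sketch of how much of a 16:9 frame survives a crop to each wider ratio. The function names are hypothetical, UHD 3840x2160 is used as an example source, and it assumes the bitrate is spread evenly over the frame, which is a simplification (real encoders allocate bits by content).

```python
def crop_height(source_width, target_ratio):
    """Height in pixels after cropping a frame to target_ratio at full width."""
    return round(source_width / target_ratio)


def fraction_used(source_width, source_height, target_ratio):
    """Share of the recorded frame (and, roughly, of the bitrate) kept after the crop."""
    kept_height = min(source_height, source_width / target_ratio)
    return kept_height / source_height


for ratio in (2.0, 2.35, 2.66):
    used = fraction_used(3840, 2160, ratio)
    print(f"{ratio}:1 -> 3840x{crop_height(3840, ratio)}, "
          f"{used:.0%} of the frame kept, {1 - used:.0%} cropped away")
```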

-

In addition to the above questions, and considering your budget restrictions, I'd suggest you think about the complete setup of camera/lens/accessories which you will need. The GH5 would have the advantage of taking your MFT lenses, but if you sell the Blackmagic then maybe you can sell the MFT lenses and use that money towards the new setup. There is no perfect camera, so the best way to go for you will be to align the strengths and weaknesses of what you get with the priorities / preferences / situations that you shoot in.

-

Which microphone\recorder setup to use for hidden camera type of recording?

kye replied to Amazeballs's topic in Cameras

Perhaps slightly off-topic but I once saw a BTS doco of how they filmed Big Brother (I think it was BB Australia) and although it was before I got into video, I remember it being really interesting especially about recording sound and isolating various areas so specific conversations could be heard clearly. IIRC the spa was also a challenge to get clean audio out of. That was a really long time ago, but if you can find it, that might be useful / interesting to watch.

-

For the high-end ones, are they recording to ProRes HQ? or 4444? or XQ? As a side-note, this table is very handy: https://blog.frame.io/2017/02/13/50-intermediate-codecs-compared/

-

Canon 9th July "Reimagine" event for EOS R5 and R6 unveiling

kye replied to Andrew Reid's topic in Cameras

Haha, insert comment about pocket size here. I agree about really having to mean it. In the past I've contemplated trips to safari in Africa and also to visit Antarctica and in both of those situations I'd consider taking a long tele lens with me, and when I was contemplating Antarctica I even contemplated hiring some equipment, a decent tele lens anyway, as once you're beyond the EF 100-300 you're pretty firmly in rental territory for most people. I've done interstate trips to visit family and taken my 70-210/4 but only because I knew we were going to a zoo and I wanted some close-up footage of the animals, just to try it out and see what I can get. You're right that it might sell well despite not really hitting the mark in a logical sense, I guess that's economics, and consumerism. Maybe the weekend warriors who photograph sports might like it, but I still can't get past why you wouldn't just put a TC on a shorter lens.

-

Probably a wise investment. Cheap NDs are the enemy of good colour. I should also say, happy to share some settings or whatever. I can't remember if I did in the original thread. Or post some stills you have and some inspiration stills and we can all have a go seeing if we can match them. That's fun to play around with 🙂

-

Just finished a first pass on footage from a trip late 2018. Over 18 days I shot 3024 clips totalling 5h37m, but after I'd gone and pulled my selects into a timeline, for a minute there I thought I still had 1984 clips totalling 2h35m! Using the Source Tape view in the Cut page of Resolve, it looks like I was half way through when I marked an in point but didn't mark an out point and then did an Append to End of Timeline, so I appended every clip from my in point to the end of my footage to my timeline. Oops! After fixing that little surprise, I now have 839 clips totalling 41m - much better!! I was thinking how many of the really cool shots I was going to have to cut and getting quite sad about it! I must say that I am really enjoying the new Cut page in Resolve. Especially the Source Tape view (despite the above snafu) as you can just use the J-K-L keys across all of your footage without having to manually go to the next clip. Combined with I and O and then P to append the range to the timeline I can edit with one hand and have a drink in the other 🙂