Posts: 8,174

Everything posted by kye

-

Funny... when I read "Can't see why a rental house would be interested" I interpret that as saying that 'not a single rental house would be interested', not 'some would be interested but not enough to make it commercially viable'. It's like saying 'I can't see why a person would be interested in marrying Jack' but meaning 'only a few dozen people would be interested in marrying Jack'. Poor Jack - he'll be single forever unless dozens of people fall madly in love with him!

-

Given the track record of Kodak's business decisions throughout the transition from film to digital, I wouldn't rule anything out in terms of what they might be thinking / hoping for. I would, however, be confident in saying that making it primarily for rental houses would likely be a commercial mistake 🙂

-

Just for interest, I went back to the original posts. I've added my own emphasis. Clark said this: You replied with this: and I replied with this: It is a strange thing how discussions can often veer significantly from what was being discussed originally, and no-one bothers to go back a page or two and see what was actually said.

-

So, we agree that there might be one unit in a rental house somewhere? That was literally my only point... somehow you've managed to change my argument and then argue against me 🙂

-

This thread inspired a different one: I think that what I described in that thread is one of the underlying factors in this discussion.

-

I think one of the main sources of confusion and disagreement is that creativity and technology used to be aligned, but are now diverging, and it's confusing a lot of people.

What I am talking about

Take resolution as an example:

First, film and lenses were low resolution, and everyone wanted them to be higher.
Then, digital was low resolution, and everyone wanted it to be higher.
At some point, somewhere between 2K and 6K, the resolution of sensors and lenses exceeded what the human visual system can resolve.

This meant that the people who wanted to create for the human eye and the people who loved tech were aligned up until that threshold was reached. Now the perceptual people are saying "enough already - we've reached the goal - put your development efforts into something that matters" and the tech people are saying "more is more - I thought you agreed with us - why would you choose this inferior thing instead of the latest and best thing???"

It's the same with frame rates, as we have seen in a very civil and productive discussion in another thread.... 😂😂😂

Dynamic range is approaching this point, with some saying there is enough, and the discussion is starting to move from "everyone needs more" to "sometimes I need more", and will eventually be "we're done - except for specific and rare situations".

Bitrates and codec quality are getting into this territory, on the capture side at least, and streaming bitrates will get there in years to come as internet bandwidth inevitably increases exponentially.

There are many other examples...

Some predictions

I suspect the discussion will further segment into the "virtual realism" industry and the "creative storytelling" industry.

The "creative storytelling" industry will continue in the directions that cinema and TV have been developing in for the last century. This will be 2D image capture and playback, different lenses used, heavy editing with 2-10s per shot, sound design in post, potentially heavy VFX, and a focus on creating an emotional journey for the viewer.

The "virtual realism" industry will develop from its current infancy. It will be for immersing yourself in situations that you wish to experience but don't have access to, or wish to experience multiple times. This will likely be things like music concerts, cultural experiences like festivals, events like watching the Pope speak at the Vatican or attending an F1 or Nascar race or a space shuttle launch, personal recordings like your child's school play or dance recital, scenes from nature like watching the sun rise in Death Valley, and (of course) the adult entertainment industry. This will be 3D VR, minimal editing with very long immersive shots (maybe even just a single shot for a concert etc), sound captured almost exclusively on location or very naturalistic sound design in post, and a focus on creating an experience that matches reality in as many ways as possible. This technology will likely also extend into capturing other senses, like smells, air movement, temperature, etc.

The sibling of the "virtual realism" industry will be the "virtual worlds" industry, which will be the same as above, but with everything computer generated. This will be things like gaming, interactive experiences, etc. It will rely on mostly the same viewing technology, augmented with things like force-feedback / touch controls, and of course a massively powerful computer generating the virtual world.

I also predict the virtual realism folks will step into discussions that the creative storytelling folks are having, and the worlds will collide, mis-communication will dominate, egos will be bruised, etc. This already happens when documentary folks start talking to narrative folks, and things like camera size and low-light performance are hotly debated by people with vastly different requirements and priorities.

-

Here's a video that explains the basics of lens choice: Perhaps the single biggest take-away from this video is how the cinematographer is speaking - he is talking about how he wants the audience to feel, not what is 'realistic'. In fact he introduces the video by saying "Hello. I'm Tom Single and I've been a cinematographer for the past 40 years. Today I'm going to be focusing on how film-makers achieve the desired mood as it relates to lens choices". Think about that... "the desired mood". Realism isn't the goal, and it's not even relevant in this context. It's completely beside the point for the industry that he's in. You can take almost any aspect of film-making, and when you find very experienced people talking about it, it will always be discussed in the context of the mood and perceptual associations you want to create.

-

Nice work! I like the colour grading and overall image processing, the texture is nice too. The long shutter on the movement is cool, and getting the right level of shake to represent the experience of riding a motorcycle on modern streets was a nice touch. 🙂

-

I was suggesting that there might be one. I thought you were saying there wouldn't be any. I wouldn't imagine they would be common, but I did think there would be sufficient demand in the market for the most film-centric rental house in the middle of Hollywood to have at least a single unit.

-

I would imagine the niche would be larger than you might think. I think the revival of 35mm still film is a reasonable parallel - it is much easier to shoot digital and emulate film in post with one of the many excellent plugins available, but people like shooting film because it's somehow "authentic". Noam Kroll shoots a lot on film, as I am sure you're aware; he has shot a number of short films on it, and shot this ad on Super 8mm: https://noamkroll.com/shooting-super-8mm-red-gemini-for-banana-republic-in-joshua-tree/

-

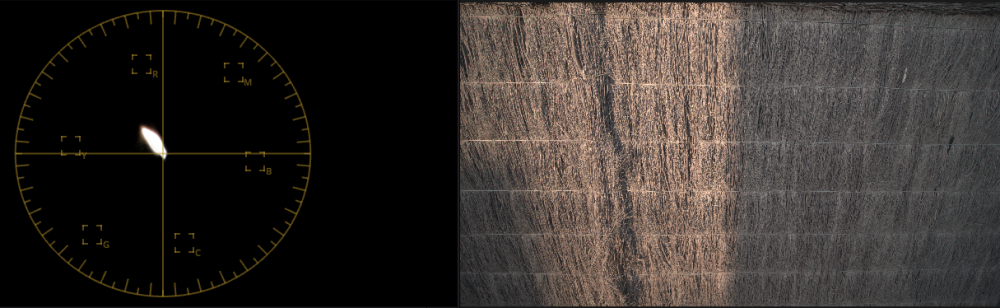

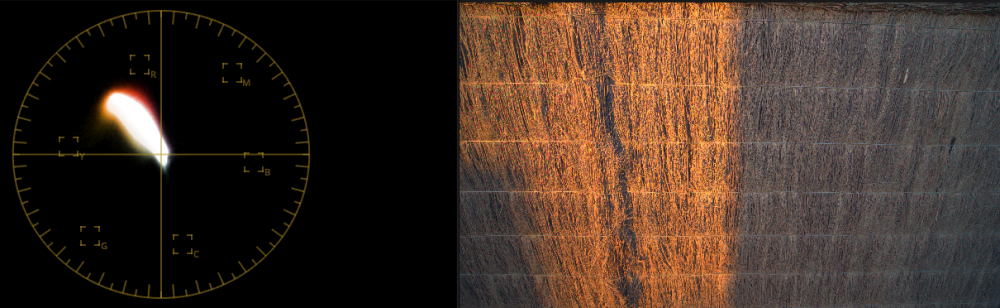

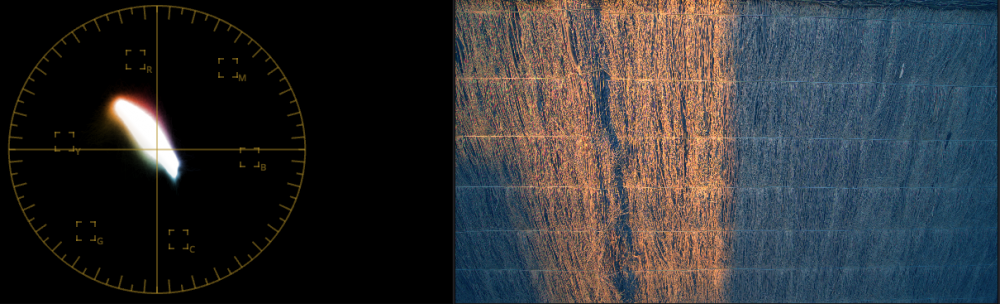

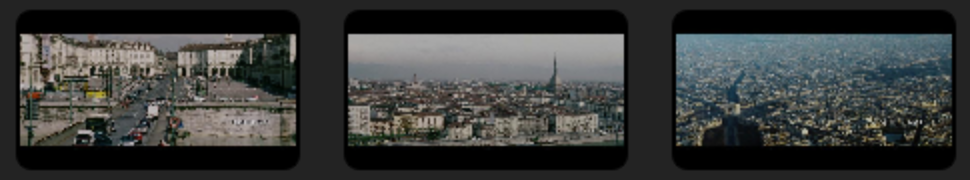

Actually, the orange and teal look is copied from reality (but sometimes dramatically overdone). Any time the sun is shining and the sky is blue, objects lit directly by the sun will appear one colour, and objects in the shade will be lit solely by reflected light, a significant amount of which will have come from the blue sky. So shadows are bluer than things lit by the sun, and in comparison, things lit by the sun are more orange (the opposite of the colour of the sky) than the shadows. This is a subtle effect, but it is observable. I did the test myself.

Here's a RAW photo of my fence at sunset:

If we radically juice up the saturation, then we get this:

and if we shift the white balance cooler, then we get this:

So, although reality doesn't look anywhere near as strong as this colour grade, the orange/teal look is part of reality, not a fictional thing that's made up.

Also, a great many movie colour grades don't have the orange and teal look. Here are a bunch of movie stills from blockbusters (you have to click and expand the image to view it large enough). Many of them are almost one hue, with almost zero colour separation:

But, once again, since you missed the point I was trying to make... with all the equipment and talent these movies have at their disposal, why on earth would they look like this if they were trying to make them look realistic?

You've got it all wrong - it's the other way around. People who make movies want to make things look a certain way, and shooting 24p is one of the (dozens / hundreds of) ways they accomplish this.

I'm not sure what point you're trying to make? Genuinely? If it was simply that movies were in 24p but were trying to be as real as possible in every other way, then yes, you could make the argument that 24p was a legacy choice, but there is practically no aspect of movie-making that is trying to imitate reality.

I think you're exactly right. If these movies were 'realistic' then they would look like small reality shows.

The evolution of film-making started with recording theatre productions. There were no cuts; it was like you were sitting in the crowd watching a play. They didn't think they could edit, because real life doesn't suddenly jump to a new location. When they worked out that cutting was fine and the human mind didn't get disoriented by it, they still thought that the mind wouldn't understand jumps in time, so they had continuity editing: if someone entered the room, you'd see the whole sequence of them opening the door, walking through it, closing it behind them, then walking across the room, and only then starting to speak to the person inside. Turns out we're completely fine with cutting most of that out - in dreams we experience time jumps, and one theory is that we're fine with them in film because dreams do it too. The history of film-making is a journey from one-shot films that made you feel like you were at a theatre production to Bayhem, Memento, Interstellar, etc. If anyone wanted realism then they've been walking in the wrong direction for an entire century now. Either the entire history of cinema was made by people who are completely incompetent, or they're aiming at something different than you are.
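To make the sunlit-vs-shade explanation of orange and teal a bit more concrete, here's a minimal Python sketch of the same steps (boost saturation, then shift white balance cooler) applied to two made-up linear RGB pixels. The pixel values and gains are hypothetical, not measured from the fence photo, and the saturation function is one simple approach (scaling each channel's distance from Rec.709 luma), not how Resolve or any raw converter actually implements it.

```python
"""Toy illustration of the sunlight-vs-skylight colour split.

All pixel values and white-balance gains below are HYPOTHETICAL.
"""

# Rec.709 luminance weights, applied here to linear RGB values in 0..1.
LUMA_WEIGHTS = (0.2126, 0.7152, 0.0722)


def luma(rgb):
    """Weighted sum of linear R, G, B using Rec.709 coefficients."""
    return sum(w * c for w, c in zip(LUMA_WEIGHTS, rgb))


def saturate(rgb, amount):
    """Scale each channel's distance from luma by `amount` (1.0 = unchanged)."""
    y = luma(rgb)
    return tuple(y + (c - y) * amount for c in rgb)


def white_balance(rgb, gains):
    """Multiply each channel by a per-channel gain (a simple white-balance shift)."""
    return tuple(c * g for c, g in zip(rgb, gains))


def blue_minus_red(rgb):
    """Positive = bluer (cooler), negative = more orange (warmer)."""
    return rgb[2] - rgb[0]


# Hypothetical sunset samples: both patches are slightly warm, but the
# shaded one (lit partly by blue skylight) is much less warm than the sunlit one.
SUNLIT = (0.60, 0.50, 0.40)
SHADE = (0.22, 0.21, 0.21)

# Hypothetical "cooler" white-balance gains: less red, more blue.
COOLER = (0.85, 1.0, 1.15)

if __name__ == "__main__":
    for name, px in (("sunlit", SUNLIT), ("shade", SHADE)):
        boosted = saturate(px, 3.0)
        cooled = white_balance(boosted, COOLER)
        print(f"{name}: original {blue_minus_red(px):+.3f}, "
              f"saturated {blue_minus_red(boosted):+.3f}, "
              f"saturated+cooler {blue_minus_red(cooled):+.3f}")
```

With these numbers both patches stay warm after the saturation boost (the shade only slightly), and the cooler white balance then tips the shade over to blue while the sunlit patch stays orange, which is the same relative split the photo demonstrates.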

-

Everyone is complaining about their EVF and LCD screens, but she's not!

-

The whole idea of movie stars when I was growing up was that they were "larger than life". I think, once you start looking, you'll find that practically nothing about cinema is even remotely realistic / real-looking. Look at the visual design / colour grading for a start.... I mean, these projects all had the budget, had highly skilled people, and had every opportunity to make things look lifelike, but none of these things look remotely like reality. Even the camera angles and compositions and focal lengths - none of these make me feel like I'm looking at reality or "I am there". Bottom line: studios want to make money, creative people want to make "art", neither of these are better if things look like reality. I'm in reality every moment of every day, why would I want movies to look the same way? It's called "escapism" not "teleport me to somewhere else that also looks like real-life... ism".

-

He does. I applaud Markus for the work he does and the passion he brings, trying to beat back the horde of YouTube-Bros who promote camera worship and the followers who turn this into the idea of camera specs above all else.

But there's a progression that occurs:

At first, people see great work and the cool tools and assume that the tools make the great work - TOOLS ARE EVERYTHING
Then, people get some good tools and the work doesn't magically get better. They are disillusioned - TOOLS DON'T MATTER
Then they develop their skills, hone their craft, and gradually understand that both matter, and that the picture is a nuanced one - TOOLS DON'T MATTER (BUT STILL DO)

This is the same for specs - they are everything, then they are nothing, then they matter a bit but aren't everything. By the time you get to the third phase, you start to see a few things:

Some things matter a LOT, but only in some situations, and don't matter at all in others
Some things matter a bit, in most situations
Some things matter a lot to some people, but less to others, depending on their taste

Film-making is an enormously subtle art. Try replicating a particular look from a specific film/show/scene and you'll find that getting the major things right will get you part of the way, but to close the gap you will need to work on dozens of things, hundreds maybe.

The purpose of any finished work is to communicate something to the audience, and for this, the aesthetic always matters. Even if the content is purely to communicate information, if you shoot a college-looking bro delivering the lines sitting on a couch drinking a brew, filmed on a phone by someone lying on the floor, well, it's not going to seem like reliable or trustworthy information, unless it's about how many beers were had at the party last night (and even then...). The exact same words delivered by someone in a suit sitting at a desk, shot with a longer lens on a tripod with nice lighting, will usually elicit a very different response (sometimes one of trust, and sometimes one of mistrust, but different all the same). A person wearing glasses and a lab coat standing in front of science-ish stuff in a lab is different again.

Humans are emotional animals, and we feel first and think second. There isn't any form of video content that isn't impacted by the aesthetic choices made in its production. Some of those choices might be so small that they don't seem relevant, but they'll still be there in the mix.

-

Even if budget were no object, sadly it's the GX85 for me. It's a very compromised option in almost every way, but it's the right balance of trade-offs. In a way I'm lucky that my best camera isn't ridiculously expensive, but it also means there's nothing I can do to work towards a better setup.

-

...beard flowing down majestically into the unbridgeable abyss...

-

Maybe some niche rental house in LA? Even just if folks making music videos wanted to rent it?

-

Time will tell if it's just familiarity or if it's actually something innate. I wouldn't be so quick to assume that the human visual system has nothing to do with how we feel about what we see, and that it's all equal and is just what we're used to.

-

I'd be curious to see proper research on the topic. My prediction would be that a certain percentage would identify that it "looks different" in some way, and would be able to identify the effect in an A/B/X-style test. I suspect that a further percentage would say it looks the same but somehow feels different, essentially anthropomorphising it as sadder or more surreal or something.

I've done this test with a few people in a controlled environment. I recorded a tree in my backyard moving in a light breeze with my phone in 24p, 30p and 60p, and put each clip onto its own timeline in Resolve; playing each timeline back through the UltraStudio 3G switched the monitor to the matching frame rate. It wasn't perfect, but all the clips were recorded with very similar settings, so it wasn't a bad test.

Perhaps the best test would be to render a 3D environment at the three frame rates, with each render using the same random seed, so that the motion in the scene would be exactly the same. Of course, if you were going to do it, I'd put in a few other things too, like varying the shutter angle. It would be a huge amount of work and would require a pretty large sample group to get meaningful results.

Good question. I've noticed the gulf widening between "video should look like reality, so technology advancement is awesome" and "video should be the highest quality to democratise high-end cinema and advance the state of the art". There are also the "technology is always good, why are you talking about a story?" folks, but they're best ignored 🙂 The challenge in this debate is that if we're not even trying to achieve the same goals, then what's the point of discussing the tools?

Interesting concept! I think you might be overstating the takeover by the heavy-VFX segment of the industry, and perhaps even the nature of the segments themselves. Certainly a majority of Hollywood income might come from VFX blockbusters, but the world is a lot larger than Hollywood. The majority of films made likely weren't VFX-heavy, and the majority of films that people actually cared about definitely wouldn't have been. If you asked me if I'd seen <insert blockbuster here> I probably couldn't answer, because truthfully they're mostly forgettable. On many occasions I've been pressured into watching a movie with my family that one of my kids chose, and the experience was mostly the same - famous actors / bursts of action / regular laughs / the USA wins in the end - and then a few days later I'd remember that I watched the film but genuinely couldn't remember the plot. This is in contrast to something like Roma, where years later I remember some aspects, but I also remember how it felt in critical moments and how different my life is to theirs.
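On the A/B/X idea: one standard way to judge whether someone's score is better than guessing is a one-sided binomial test, since a pure guesser gets each trial right with probability 0.5. Here's a minimal Python sketch of that, assuming independent trials and a conventional 0.05 threshold (both are choices, not anything from a specific study). It only covers one person's run of trials; pooling results across a whole sample group needs more care than this.

```python
from math import comb


def abx_p_value(correct, trials, p_guess=0.5):
    """Probability of getting at least `correct` right out of `trials`
    by guessing alone (one-sided binomial tail)."""
    if not 0 <= correct <= trials:
        raise ValueError("correct must be between 0 and trials")
    return sum(
        comb(trials, k) * p_guess**k * (1 - p_guess) ** (trials - k)
        for k in range(correct, trials + 1)
    )


def min_correct_for_significance(trials, alpha=0.05):
    """Smallest score that would be unlikely (p <= alpha) under pure guessing."""
    for k in range(trials + 1):
        if abx_p_value(k, trials) <= alpha:
            return k
    return None  # with this few trials, no score is convincing


if __name__ == "__main__":
    for n in (5, 10, 20, 40):
        print(f"{n} trials: need {min_correct_for_significance(n)} correct")
```

For example, 9 out of 10 correct has about a 1% chance of happening by luck, while 8 out of 10 is just over 5%, which is part of why these tests need more trials than people expect.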

-

Computer displays are a long way from being superior to human vision, so it's all about compromises and the various aesthetics of each choice. I would encourage you to learn more about how human vision works; it can be very helpful when developing an aesthetic. A few things that might be of interest:

Here's a research paper outlining that the human eye can perceive flicker at 500Hz: https://pubmed.ncbi.nlm.nih.gov/25644611/

Here's a research paper saying that people could see and interpret an image from a frame shown for 13ms, which is one frame of 77fps video: https://dspace.mit.edu/bitstream/handle/1721.1/107157/13414_2013_605_ReferencePDF.pdf;jsessionid=6850F7A807AB7EEEFA83FFEEE3ACCAEF?sequence=1

The human eye sees continuously, without "frames", and has continuous motion blur. Normal video has both frames and motion blur, with the motion blur expressed as a proportion of the frame interval (where a 360-degree shutter is 100%). The human eye, however, resolves time much more finely than its motion blur would suggest, so (for example) we might effectively have a "shutter angle" spanning dozens or hundreds of frames.

Video is an area where technology is improving rapidly and a lot of the time the newer things are better, but that's not always the case. The other thing to keep in mind is that there are different goals - some people want to create something that looks lifelike, but other people want to create things that don't look real. Many of the tools and techniques in cinema and high-end TV production are there to deliberately make things not look real, but to look surreal or 'larger than life' etc.

I've been doing video and high-end audio for quite some time and have put many folks in front of high-end systems or shown people controlled tests of things back-to-back. Often people do notice differences but don't talk about them, because they don't know what you're asking, or don't have the language to describe what they're seeing or hearing and don't want to sound dumb, or simply don't care and don't want to get into some long discussion. Asking people who have just seen a movie for the first time whether they noticed "anything different" is a very strange approach - if they hadn't seen the film before, then everything about it would have been different. Literally thousands of things - the costumes, the lighting, the seats, how loud it was, how this cinema smelled compared to the last one, etc. Better would be to sit people in front of a controlled test and show them two images with as few variables changed as possible. Even then it can be challenging. When I first started out I couldn't tell the difference between 24p and 60p; now I hate the way 60p looks and quite dislike 30p as well. Lots of people also know what the 'soap opera effect' is without being camera nerds.
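The arithmetic behind the 13ms ≈ 77fps figure and the shutter-angle description is simple enough to sketch. Here's a minimal Python version, assuming the usual film convention that exposure time = (shutter angle / 360) × frame interval. `equivalent_shutter_angle` is a hypothetical helper for thinking about blur that lasts longer than one frame; it doesn't describe any real camera setting, since a single-frame shutter can't exceed 360 degrees.

```python
def frame_interval_ms(fps):
    """Duration of one frame in milliseconds."""
    return 1000.0 / fps


def fps_for_frame_duration(ms):
    """Frame rate at which each frame lasts `ms` milliseconds."""
    return 1000.0 / ms


def exposure_time_s(fps, shutter_angle_deg):
    """Exposure (motion-blur) time per frame: angle / 360 of the frame interval."""
    return (shutter_angle_deg / 360.0) / fps


def equivalent_shutter_angle(blur_s, fps):
    """Shutter angle that a blur lasting `blur_s` seconds would correspond to
    at `fps`. Values above 360 mean the blur spans more than one frame."""
    return 360.0 * blur_s * fps


if __name__ == "__main__":
    print(f"13 ms per frame ~ {fps_for_frame_duration(13):.1f} fps")
    for fps in (24, 30, 60):
        print(f"{fps}p: {frame_interval_ms(fps):.1f} ms per frame, "
              f"180-degree shutter = 1/{1 / exposure_time_s(fps, 180):.0f} s")
```

So a blur lasting two full frame intervals at 24p would be a 720-degree "shutter angle", and blur spanning dozens of frames at a high frame rate would run into the thousands of degrees, which is the sense in which the eye doesn't map neatly onto video settings.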

-

I'm really interested in how long the longest end needs to be. You've basically said that 70mm is a bit short and that 75 or 80mm is better, but that you use a 35-150mm outdoors.

How do you feel about the 100-150mm range? Do you use it a lot? If so, what specific types of compositions and situations do you use it for?

How do you feel about the 150-200mm range? Gathering from the above and other posts, you seem to have traded it away for other considerations, but is the focal length useful at all? Or is it too long for what you shoot?

If you were given a weightless 28-200mm F2.8 lens, how many of your compositions would be above 150mm, and what would they be? What about a 28-300mm?

I guess what I'm looking for is feedback on the creative side: for example, that anything above 150mm is too compressed, or that it's only useful in certain situations, or that it feels too distant and out of context in an edit, or that it's not useful at all, etc.

-

I'm keen to get some feedback on focal lengths. As many know, I shoot travel and want to be able to work super-quickly to get environmental shots / environmental portraits / macro / detail shots, and have narrowed it down to three options:

GX85 + 12-35mm F2.8
GX85 + 12-60mm F2.8-4.0
GX85 + 14-140mm F3.5-5.6

The GX85 also has the 2x digital punch-in, which is quite usable.

GX85 + 12-35mm F2.8
This is essentially a 24-70mm equivalent (48-140mm with the punch-in) with a constant aperture, and is the best for low light. The question is whether it's long enough for getting all the portrait shots.

GX85 + 12-60mm F2.8-4.0
Same as above but slower and longer (24-120mm equivalent). I'm still not sure if this is long enough.

GX85 + 14-140mm F3.5-5.6
Slower but way longer (28-280mm equivalent). This would be great for everything, including zoos / safaris, but it isn't as wide at the wide end, which is only a slight difference but is still unfortunate.

I'm keen to hear people's thoughts on the practical implications of these options in terms of the final shots they will provide to the edit. My experience has been that the more variety of shots you can get when working a scene, the better the final edit. I'm not that bothered about the relative DoF considerations, but aperture matters for low light of course. I'm shooting auto-SS so am not fiddling with NDs, so the constant aperture doesn't matter in this regard.

There are obvious parallels to shooting events, weddings, sports, and other genres - keen to hear from @BTM_Pix @MrSMW and others who shoot in similar available-light / uncontrolled / run-n-gun / guerrilla situations.
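For comparing the three GX85 options side by side, here's a minimal Python sketch of the full-frame-equivalent focal ranges, assuming a 2.0x crop factor for Micro Four Thirds and treating the 2x digital punch-in as a further 2x multiplier on focal length. It only covers focal length; aperture and DoF equivalence are deliberately left out, since those weren't the concern here.

```python
# Assumed 2.0x Micro Four Thirds crop factor and 2x digital punch-in.
MFT_CROP = 2.0
PUNCH_IN = 2.0

LENSES = {
    "12-35mm F2.8": (12, 35),
    "12-60mm F2.8-4.0": (12, 60),
    "14-140mm F3.5-5.6": (14, 140),
}


def equivalent_range(wide_mm, tele_mm, crop=MFT_CROP, punch_in=1.0):
    """Return the (wide, tele) full-frame-equivalent focal lengths in mm."""
    return (wide_mm * crop * punch_in, tele_mm * crop * punch_in)


if __name__ == "__main__":
    for name, (wide, tele) in LENSES.items():
        native = equivalent_range(wide, tele)
        cropped = equivalent_range(wide, tele, punch_in=PUNCH_IN)
        print(f"{name}: {native[0]:.0f}-{native[1]:.0f}mm equiv, "
              f"{cropped[0]:.0f}-{cropped[1]:.0f}mm with 2x punch-in")
```

Running it gives 24-70mm (48-140mm punched in), 24-120mm (48-240mm) and 28-280mm (56-560mm), so the punch-in on the 12-35mm roughly covers the native range of the 12-60mm, at the cost of image quality from the digital crop.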

-

When I made the move from Windows to Mac, the primary reason was that I wanted to use a computer without having a part-time job as a systems administrator, which is what Windows forces you to do just to use the computer. I was stunned at the time by how much time I used to spend on the computer not doing the things I wanted to do, but doing technical things to enable those things to be done. TBH I'm over that, so while I'm perfectly capable of managing the IT of a medium-sized business, I'd rather just use the computer for what I want to use it for, and not have to troubleshoot an array of file and network management infrastructure.

-

Panasonic S5 II (What does Panasonic have up their sleeve?)

kye replied to newfoundmass's topic in Cameras

There were horses and monkeys, so maybe sports something? I recognised very few people there, so maybe it's the MFT YouTubers rather than the Sony FF fan club?

-

Yes, I understand the logic. One element of colour science that's often forgotten is the choice of RGB filters for the colour filter array, but I'm not sure if those would also be the same between these cameras, or if they'd just be supplied by Sony and therefore be identical between brands? In theory different filters would create differences at the corners of the gamut, but once transformed to XYZ, the differences between two different filter sets on the same sensor would be rounding errors at best. The best discussion of digital processing I've seen is the one on the Alexa 35 - page 52 onwards. https://www.fdtimes.com/pdfs/free/115FDTimes-June2022-2.04-150.pdf As you say, much of what is going on might well be things that occur on the sensor. The other component worth looking at between RAW sources is the de-bayer algorithm each camera uses. AFAIK you can't choose which de-bayer algorithm gets used on RAW footage, so there might be differences between the manufacturers' algorithms contributing to the differences we see in real life.
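Here's a toy Python sketch of the "rounding errors once transformed to XYZ" idea, under one big assumption: that the second camera's filters behave like an exact, invertible linear mix of the first camera's. In that case each camera's own 3x3 RGB-to-XYZ matrix lands on the same XYZ values. The Rec.709-to-XYZ matrix is only a stand-in for "camera A", and `FILTER_MIX` is entirely hypothetical. Real filter sets are only approximately related like this, which is where the residual gamut-corner differences would come from.

```python
from typing import List, Sequence

Matrix = List[List[float]]

# Linear Rec.709/sRGB (D65) to CIE XYZ. Used only as a stand-in for "camera A";
# a real camera's matrix depends on its own sensor and filters.
REC709_TO_XYZ: Matrix = [
    [0.4124, 0.3576, 0.1805],
    [0.2126, 0.7152, 0.0722],
    [0.0193, 0.1192, 0.9505],
]

# HYPOTHETICAL "camera B": same sensor, different RGB filters, modelled as an
# invertible linear mix of camera A's channels.
FILTER_MIX: Matrix = [
    [0.90, 0.10, 0.00],
    [0.05, 0.90, 0.05],
    [0.00, 0.15, 0.85],
]


def mat_vec(m: Matrix, v: Sequence[float]) -> List[float]:
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def mat_mul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 3x3 matrices."""
    return [[sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3)]
            for r in range(3)]


def mat_inv(m: Matrix) -> Matrix:
    """Invert a 3x3 matrix using the adjugate / determinant formula."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-12:
        raise ValueError("matrix is singular")
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


# Camera B's own RGB-to-XYZ matrix: undo its filter mix, then apply camera A's.
CAMERA_B_TO_XYZ = mat_mul(REC709_TO_XYZ, mat_inv(FILTER_MIX))

if __name__ == "__main__":
    raw_a = [0.8, 0.4, 0.2]            # hypothetical linear raw values, camera A
    raw_b = mat_vec(FILTER_MIX, raw_a)  # the same light through B's filters
    print("camera B raw:", [round(x, 4) for x in raw_b])
    print("XYZ via A:   ", [round(x, 6) for x in mat_vec(REC709_TO_XYZ, raw_a)])
    print("XYZ via B:   ", [round(x, 6) for x in mat_vec(CAMERA_B_TO_XYZ, raw_b)])
```

The raw values differ between the two cameras, but the XYZ results agree to floating-point precision, which is the "rounding errors" case. Any real-world difference would come from how far actual filters depart from this linear relationship.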