KnightsFan

Members

Posts: 1,385

Everything posted by KnightsFan

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

Quick demo of the effect of water on image thickness: two pics from my (low-end) phone, cropped and scaled to a manageable size. These may be the worst pictures in existence, but I think that simply adding water, which increases specularity, contrast, and color saturation, drastically increases thickness. Same settings, taken about 5 seconds apart.
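
One way to put numbers on a before/after comparison like this is to measure average saturation and luma contrast for each frame. A minimal Python sketch, assuming the photos have already been decoded into lists of (r, g, b) floats in 0..1. The `dry`/`wet` pixel values are made-up stand-ins, not data from the pictures above, and HSV saturation plus the standard deviation of Rec. 709 luma are just one reasonable choice of metrics.

```python
import colorsys
import statistics

# Assumption: each image is a list of (r, g, b) tuples with values in 0..1.

def mean_saturation(pixels):
    """Average HSV saturation over all pixels (0 = grey, 1 = fully saturated)."""
    return statistics.fmean(colorsys.rgb_to_hsv(r, g, b)[1] for r, g, b in pixels)

def rms_contrast(pixels):
    """Population standard deviation of Rec. 709 luma, a common RMS-contrast measure."""
    luma = [0.2126 * r + 0.7152 * g + 0.0722 * b for r, g, b in pixels]
    return statistics.pstdev(luma)

# Hypothetical pixel samples: a dusty, evenly lit surface vs. the same surface wet,
# with darker, more saturated patches and one bright specular glint.
dry = [(0.55, 0.50, 0.45), (0.60, 0.56, 0.50), (0.50, 0.47, 0.42)]
wet = [(0.35, 0.28, 0.20), (0.90, 0.88, 0.85), (0.30, 0.22, 0.15)]

for name, img in (("dry", dry), ("wet", wet)):
    print(f"{name}: saturation={mean_saturation(img):.3f}, contrast={rms_contrast(img):.3f}")
```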

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

A lot of our examples have been soft, vintage, or film. I just watched the 12K sample footage from the other thread, and I think it displays thick colors despite being an ultra-sharp digital capture. So I don't think softening optics or post-processing are a necessity.

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

Yes, I think the glow helps a lot here: it softens those highlights, making them dreamier rather than sharp and pointy, and it makes the image feel more 3D, since the highlights are in the extreme background (like you said, almost like mist between subject and background). I agree that the relation between the subject and the other colors is critical, and you can't really change that with different sensors or color correction. That's why I say it's mainly about what's in the scene. Furthermore, if the objects in frame don't have subtle variation, you can't really add it in. The soft, diffuse light coming from the side in The Grandmaster allows every texture to have a smooth gradation from light to dark, whereas your subject in the boat is lit much more evenly from left to right. I assume you're also not employing a makeup team? That's really the difference between good and bad skin tones, particularly when getting different people to look good in the same shot.

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

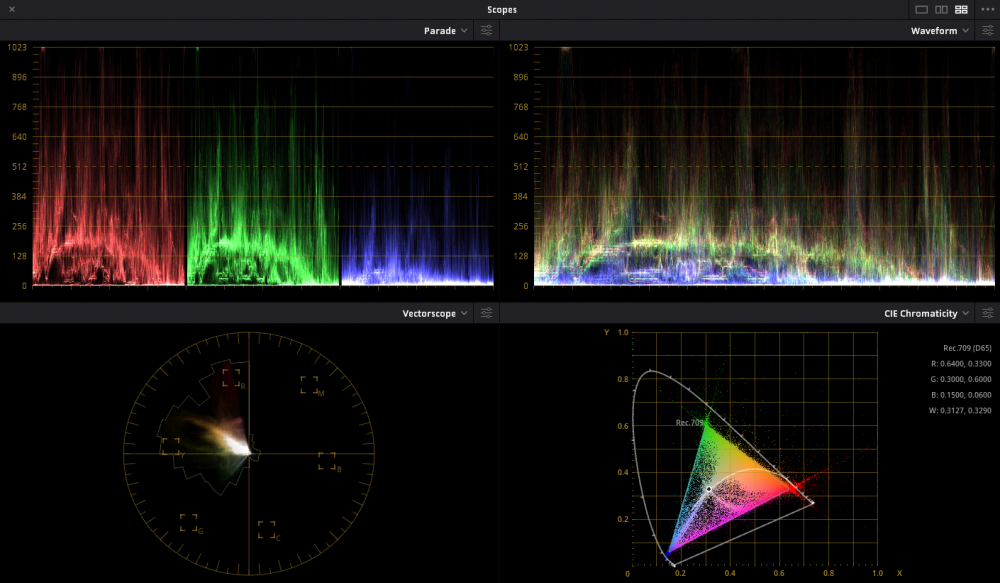

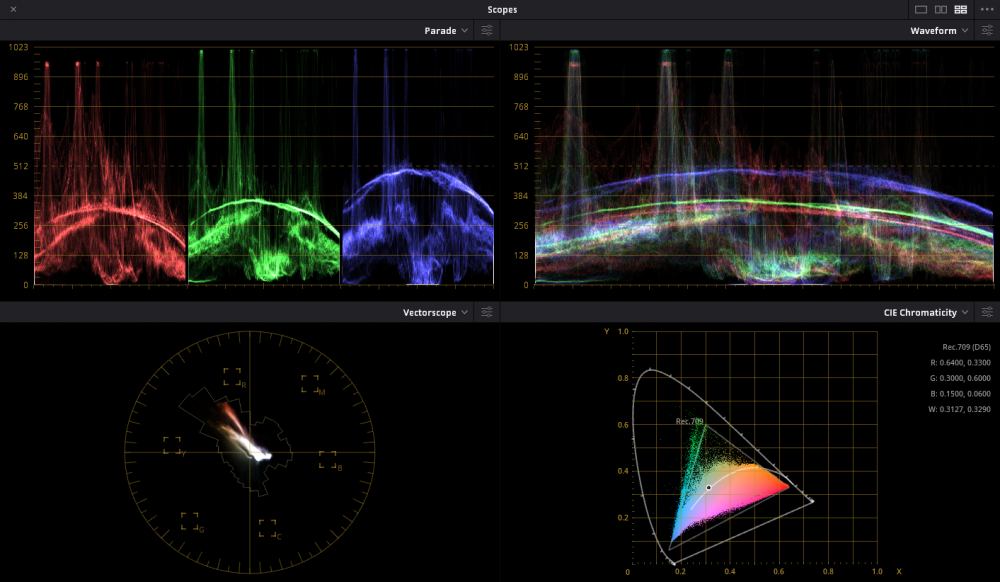

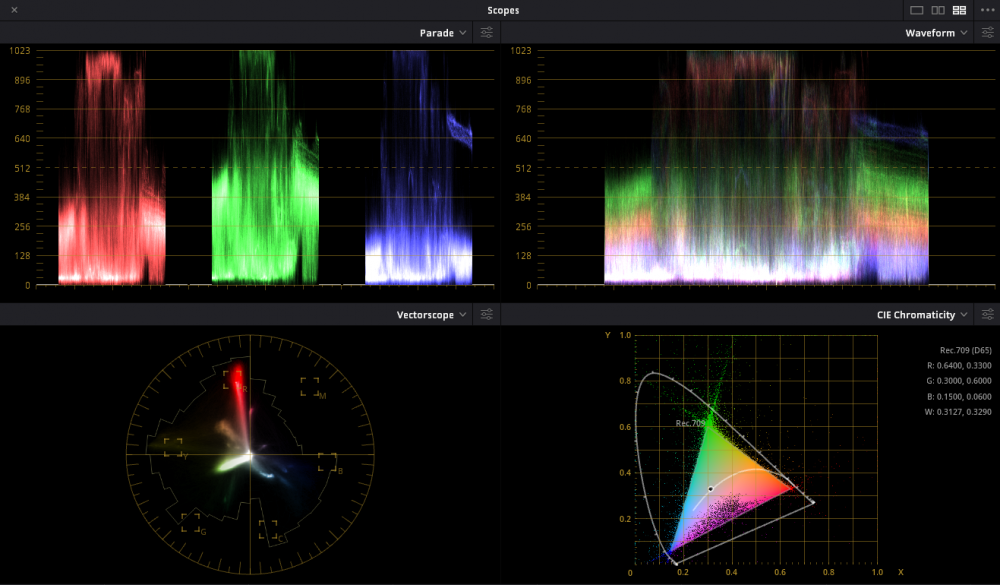

@kye I don't think those images quite nail it. I gathered a couple of pictures that fit my idea of thickness, and in addition to rich shadows, they all have a real sense of 3D depth thanks to the lighting and lenses, so I think that is a factor. In the pictures you posted, there are essentially two layers: subject and background. Not sure what camera was used, but most digital cameras will struggle to make strong colors in actual low light, or, if the camera is designed for low light (e.g., the A7s2), it has weak color filters, which makes getting rich saturation essentially impossible. Here's a frame from The Grandmaster, which I think hits peak thickness: dark, rich colors, a couple of highlights, real depth with several layers, and a nice falloff of focus that makes things a little dreamy rather than out of focus. And here are the scopes, which clearly show the softness of the tones and how almost everything falls into the shadows. For comparison, here are the scopes from the picture of the man in the orange shirt in the boat, which show definite, harsh transitions everywhere. Perhaps. Do you have some examples? For example, the bright daylight Kodak test posted earlier here has this scope (mostly shadow, though a little brighter than The Grandmaster, with fairly smooth transitions). And to be honest, I think the extreme color saturation, particularly on bright objects, makes it look less thick.
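
A rough, text-only stand-in for reading a waveform is to bucket each pixel's luma and check how much of the frame sits in the shadows. A sketch, assuming gamma-encoded R'G'B' values in 0..1; the 0.25 shadow threshold, the bin count, and the sample `frame` are arbitrary choices for illustration, not values taken from the scopes above.

```python
def rec709_luma(r, g, b):
    """Rec. 709 luma from gamma-encoded R'G'B' in 0..1."""
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def luma_histogram(pixels, bins=10):
    """Pixel counts per equal-width luma band, darkest band first."""
    counts = [0] * bins
    for r, g, b in pixels:
        y = rec709_luma(r, g, b)
        counts[min(int(y * bins), bins - 1)] += 1  # clamp y == 1.0 into the top band
    return counts

def shadow_fraction(pixels, threshold=0.25):
    """Share of pixels whose luma is below `threshold`."""
    dark = sum(1 for r, g, b in pixels if rec709_luma(r, g, b) < threshold)
    return dark / len(pixels)

# Hypothetical frame: mostly deep tones with one bright highlight.
frame = [(0.05, 0.04, 0.03)] * 6 + [(0.15, 0.10, 0.08)] * 3 + [(0.95, 0.90, 0.80)]
print(luma_histogram(frame, bins=5))
print(shadow_fraction(frame))
```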

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

@tupp Maybe we're disagreeing on what thickness is, but I'd say about 50% of the ones you linked to are what I think of as thick. The canoe one in particular looked thick, because of the sparing use of highlights and the majority of the frame being rather dark, along with a good amount of saturation. I found the first link to be quite thin, mostly shots of vast swathes of bright sky with few saturated shadow tones. The Kodachrome stills were the same deal: depending on the content, some were thick and others were thin. If they were all done with the same film stock and process, then that confirms to me that it's mostly what is in the scene that dictates that look. I think that's because they are compressed into 8-bit JPEGs, so all the colors get smeared towards their neighbors to fit more easily onto a curve defined by 8-bit data points, not to mention the added film grain. But yeah, sort of a moot point.

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

Got some examples? Because I generally don't see those typical home videos as having thick images. They're pretty close; I don't really care if there's dithering or compression adding in-between values. You can clearly see the banding, and my point is that while banding is ugly, it isn't the primary factor in thickness.

Image thickness / density - help me figure out what it is

KnightsFan replied to kye's topic in Cameras

I've certainly been enjoying this discussion. I think that image "thickness" is 90% what is in frame and how it's lit. I think @hyalinejim is right to talk about shadow saturation, because "thick" images are usually ones with deep, rich shadows and only a few bright spots that serve to accentuate how deep the shadows are, rather than to show highlight detail. Images like the ones above of the gas station and the faces don't feel thick to me, since they have huge swathes of bright areas, whereas the pictures that @mat33 posted on page 2 have that richness. It's not a matter of reducing exposure; it's that the scene has those beautiful dark tonalities and gradations, along with some nice saturation. Some other notes:

- My Raw photos tend to end up being processed more linearly than Rec709/sRGB, which gives them deeper shadows and thus more thickness.
- Hosing down a scene with water will increase contrast and vividness for a thicker look. Might be worth doing some tests on a hot sunny day, before and after hosing it down.
- Bit depth comes into play, if only slightly. The images @kye posted had basically no difference in the highlights, but in the dark areas banding is very apparent. Lower bit depth hurts shadows because so few bits are allocated to those bottom stops. To be clear, I don't think bit depth is the defining feature, nor is compression for that matter.
- I don't believe there is any scene where a typical mirrorless camera with a documented color profile will look significantly less thick than an Alexa, given a decent colorist. I think it's 90% the scene, and then mostly color grading.
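
A quick way to see why low bit depth hurts the bottom stops: with a purely linear encoding, every stop down gets half as many code values as the one above it. A sketch, assuming a plain power-law curve (gamma 2.2) as a stand-in for real display or log curves, which spend more code values on the shadows for exactly this reason. It counts how many codes land in each stop below clipping.

```python
def codes_per_stop(bits, stops=8, gamma=1.0):
    """Count code values that decode into each stop below clipping.

    Stop 0 is the brightest stop, (0.5, 1.0] of full scale in linear light.
    gamma=1.0 models a linear encoding; gamma=2.2 models a simple power-law
    curve (an approximation, not the exact Rec. 709 or sRGB transfer function).
    """
    top = 2 ** bits - 1
    counts = [0] * stops
    for code in range(1, top + 1):
        linear = (code / top) ** gamma  # decode the code value to linear light
        stop = 0
        while linear <= 0.5 and stop < stops:
            linear *= 2  # each doubling moves up one stop
            stop += 1
        if stop < stops:  # ignore codes darker than the stops we track
            counts[stop] += 1
    return counts

print("8-bit linear: ", codes_per_stop(8))  # [128, 64, 32, 16, 8, 4, 2, 1]
print("8-bit gamma:  ", codes_per_stop(8, gamma=2.2))
print("10-bit linear:", codes_per_stop(10))
```

With linear 8-bit data the eighth stop down has a single code value left, while the power-law version spreads several codes across it at the cost of fewer codes in the brightest stop.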

The Panasonic DC-BGH1 camera soon to be announced

KnightsFan replied to Trankilstef's topic in Cameras

I don't know what all the negativity is about; this looks pretty good to me. Worse specs than a Z Cam E2, but you gain the Panasonic brand (brands aren't my thing, but they're worth real money), SDI, timecode without an annoying adapter, and the option to use that XLR module if you want. Plus it takes SD cards instead of CFast. If Z Cam didn't exist, I'd get this for sure.

I don't have super extensive experience with wireless systems. I've used Sennheiser G3s and Sony UWPs on student films, and they've always been perfectly reliable. The most annoying part is that half of film students don't know you have to gain-stage the transmitter... Earlier this year I bought a Deity Connect system; it couldn't transmit a signal 2 feet, so I RMA'd it as defective. A real shame, as they get great reviews and are a great price. What I can say is that their build quality, design, and included accessories are phenomenal. I very nearly bought another set, but my project needs changed and I got a pair of Rode Wireless Go's instead. I've been quite happy with the Go's for my specific use case, a single studio room. For this project, having zero wires is very beneficial (we're clipping the transmitters to people and using the built-in mics), so they are great in that sense. If your use case is short range and you aren't worried about the lack of a locking connector, you can save a lot of money with them. I will say I wouldn't trust them for "normal" films, as the non-locking connector is a non-starter no matter the battery life and range. That said, I think they will still be useful as plant mics; they are absolutely tiny!

Youtube 4K quality is so poor you might as well shoot 1080p

KnightsFan replied to kye's topic in Cameras

I'll amend the statement: "encoders universally do better with more input data." If you keep the output settings the same, every encoder will produce better results from a higher-fidelity input than from a lower-fidelity one. Therefore, if YouTube's visual quality drops when you upload a higher-quality file, then it is not using the same output settings.

Youtube 4K quality is so poor you might as well shoot 1080p

KnightsFan replied to kye's topic in Cameras

My second fact wasn't about whatever YouTube is doing; I was just stating that encoders almost universally do better with more input data. So if, in YouTube's case, the quality is lower from the 4K upload, then they must be encoding differently based on the input file, which actually would not be surprising. So I have no idea what YouTube is actually doing; I'm just explaining that it's possible @fuzzynormal does see an improvement.

Youtube 4K quality is so poor you might as well shoot 1080p

KnightsFan replied to kye's topic in Cameras

I haven't used YouTube in many years, but here are two facts:

1. YouTube re-encodes everything you upload.
2. The more data an encoder is given, the better the results.

While the 1080p bitrate is the same for the viewer whether you uploaded in 4K or HD, it's possible (but not a given) that any extra information YouTube's encoder is given makes a better 1080p file. Though my intuition is that the margin is so small that uploading in 4K will not give any perceptible difference for people streaming in 1080p.
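
One plausible mechanism behind point 2 (not a claim about what YouTube's pipeline actually does): when a 4K frame is downscaled to 1080p, each output pixel can be built from four source pixels, and averaging four samples of independent noise cuts its standard deviation roughly in half (by a factor of √4). A sketch, assuming a simple 2×2 box filter and synthetic Gaussian noise on a flat grey patch:

```python
import random
import statistics

def downscale_2x(frame):
    """Average each 2x2 block (a box filter), e.g. 3840x2160 -> 1920x1080."""
    return [
        [
            (frame[y][x] + frame[y][x + 1] + frame[y + 1][x] + frame[y + 1][x + 1]) / 4
            for x in range(0, len(frame[0]) - 1, 2)
        ]
        for y in range(0, len(frame) - 1, 2)
    ]

def noise_level(frame):
    """Population standard deviation of all pixel values."""
    return statistics.pstdev([v for row in frame for v in row])

rng = random.Random(0)  # fixed seed so the run is repeatable
# Hypothetical flat grey patch (value 0.5) with independent noise, sigma = 0.02.
src = [[0.5 + rng.gauss(0, 0.02) for _ in range(200)] for _ in range(200)]
dst = downscale_2x(src)

print(f"source noise: {noise_level(src):.4f}, downscaled noise: {noise_level(dst):.4f}")
```

Real encoders and scalers use better filters than a box average, and real sensor noise is not perfectly independent, so the actual gain is likely smaller; this only shows why extra input data can help.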

Youtube 4K quality is so poor you might as well shoot 1080p

KnightsFan replied to kye's topic in Cameras

With the handheld footage and moving subjects, focus is lost quite a bit. In the shot of the orange, for example, I see no difference between all 3, since the orange is moving in and out of focus. But in that first shot of the plant, which is relatively still, A is the clear winner. B and C are pretty close, but I do think that one shot has C losing ground. I'd suggest shots that are similar to what you actually shoot, since those will be most useful. I'm on a tripod most of the time, which is where you'll see the most difference between 4K and HD, especially with IPB compression.

Z97X-UD5H | 4790K | GTX 1080TI/MINI: add another 1080TI?

KnightsFan replied to Ty Harper's topic in Cameras

If there's no bottleneck, I personally would save my money, haha. I think that for normal editing your 1080 Ti probably already outperforms your CPU, so adding another would likely give no benefit.

Youtube 4K quality is so poor you might as well shoot 1080p

KnightsFan replied to kye's topic in Cameras

For me, A > B > C. C looks the worst, mainly because that shot at 1:08 looks digitally sharpened, which was visible even on a casual viewing. I wouldn't have noticed any other differences without watching closely. I'm not sure how well matched your focus was between shots, but in the first composition A seems a little clearer than B, which is a little clearer than C. That could easily be focus being off by just a hair, though.

Z97X-UD5H | 4790K | GTX 1080TI/MINI: add another 1080TI?

KnightsFan replied to Ty Harper's topic in Cameras

If the CPU is bottlenecking, then money you spend on a new GPU will be wasted until the bottleneck is resolved. Same with the SSD: if that's not working at its max currently, then you can spend all the money in the world and it won't help at all. I bring up the CPU because I had a similar CPU and GPU, and upgrading the CPU made a world of difference. If you're set on upgrading your GPU, you might want to look at the upcoming 3000 series cards before buying another 1080. The 3070 was announced at $499 for an October release, with over twice as many CUDA cores as the 1080 and a TDP of 220W, which is much less than the combined TDP of two 1080s. Plus it will work even in software that doesn't specifically use dual GPUs.
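
The back-of-the-envelope comparison as a small Python sketch. The core counts and TDPs below are assumed to be NVIDIA's reference-card figures (partner cards vary), and raw CUDA core counts are not a like-for-like performance measure across GPU generations, so treat this as arithmetic only.

```python
# Assumed reference-card specs: (CUDA cores, TDP in watts). Partner cards differ.
SPECS = {
    "GTX 1080": (2560, 180),
    "GTX 1080 Ti": (3584, 250),
    "RTX 3070": (5888, 220),
}

def core_ratio(new, old):
    """How many times more CUDA cores `new` has than `old` (not a speed ratio)."""
    return SPECS[new][0] / SPECS[old][0]

def combined_tdp(name, count=1):
    """Total board power for `count` identical cards, in watts."""
    return SPECS[name][1] * count

print(f"RTX 3070 vs GTX 1080 cores: {core_ratio('RTX 3070', 'GTX 1080'):.2f}x")
for name, count in (("GTX 1080", 2), ("GTX 1080 Ti", 2), ("RTX 3070", 1)):
    print(f"{count}x {name}: {combined_tdp(name, count)} W")
```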

Z97X-UD5H | 4790K | GTX 1080TI/MINI: add another 1080TI?

KnightsFan replied to Ty Harper's topic in Cameras

First, if you're editing high-bitrate footage from an external HDD, make sure that's not the bottleneck. A 7200 RPM drive reads at about 120 MB/s, and even two uncompressed 14-bit HD raw streams would go over that. I have a GTX 1080 and use Resolve Studio. Earlier this year I upgraded from an i7 4770 to a Ryzen 3600 and got an enormous performance boost when editing HEVC. So while decoding is done on the GPU, it's clear that the CPU can bottleneck as well. When I edit 4K H.264 or Raw, my 1080 rarely maxes out. Overall I'd be pretty surprised if you need another (or a new) GPU for basic editing and color grading. Resolve Studio is a better investment, imo, than a second 1080.
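
The HDD arithmetic, sketched in Python. Assumptions not stated in the post: 24 fps, a 1920×1080 readout, and Bayer raw storing one 14-bit sample per photosite with no container overhead. The ~120 MB/s drive figure is the one quoted above.

```python
def raw_stream_mb_per_s(width, height, bits_per_sample, fps):
    """Data rate of uncompressed Bayer raw video, one sample per photosite.

    Returns decimal megabytes per second (1 MB = 1,000,000 bytes), the unit
    drive manufacturers use.
    """
    bits_per_frame = width * height * bits_per_sample
    return bits_per_frame * fps / 8 / 1_000_000

HDD_MB_PER_S = 120  # sustained read figure quoted for a 7200 RPM drive

per_stream = raw_stream_mb_per_s(1920, 1080, 14, 24)
print(f"one stream: {per_stream:.1f} MB/s, two streams: {2 * per_stream:.1f} MB/s")
print("two streams exceed the HDD:", 2 * per_stream > HDD_MB_PER_S)
```

Under these assumptions a single stream (about 87 MB/s) fits within the drive's bandwidth, but two (about 174 MB/s) do not.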

Z97X-UD5H | 4790K | GTX 1080TI/MINI: add another 1080TI?

KnightsFan replied to Ty Harper's topic in Cameras

What type of editing do you do (VFX, color, etc.)? Do you currently use Premiere or Resolve Lite? What parts need performance increases?

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

@kye Have you done any eyeballing as well? Interesting that ffmpeg's ProRes does better than Resolve's. I expected the opposite, because when I did my test, HEVC was superior to ProRes at a fraction of the bitrate, which doesn't seem to be replicated by your measured SSIM. I wondered whether my using ffmpeg to encode ProRes was non-optimal. I didn't take SSIM measurements, and I have since deleted the files, but some of the pictures remain in the topic. From your graph, it seems impossible that HEVC at 1/5 the size of ProRes would have more detail, but that was my result. ProRes, however, kept some of the fine color noise in the VERY deep shadows, far darker than you can see without an insane luma curve. I wonder if that is enough to make the SSIM drop away for HEVC, as it's essentially discarding color information below a certain luminance? (Linking the topic for reference; in this particular test I used a Blackmagic 4.6K raw file as the source, so you can reproduce it if Blackmagic still has those files on their site.) Good call, I've read that MP4 is better than MOV. Not sure if it's true, but worth checking.
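
For anyone following along, this is roughly what an SSIM number measures: it compares means (luminance), variances (contrast), and covariance (structure) between the reference and the encode. A simplified sketch that applies the standard SSIM formula once over a whole list of luma samples. Real implementations compute it over many small local windows and average the results, so values from this toy version won't match a real tool's output, and the sample lists are hypothetical.

```python
import statistics

def global_ssim(x, y, data_range=1.0):
    """SSIM formula applied once to two equal-length lists of luma samples.

    Uses the usual stabilising constants C1 = (0.01*L)^2 and C2 = (0.03*L)^2,
    where L is the data range (1.0 for normalised values).
    """
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    mx, my = statistics.fmean(x), statistics.fmean(y)
    vx, vy = statistics.pvariance(x, mx), statistics.pvariance(y, my)
    cov = statistics.fmean([(a - mx) * (b - my) for a, b in zip(x, y)])
    return ((2 * mx * my + c1) * (2 * cov + c2)) / ((mx**2 + my**2 + c1) * (vx + vy + c2))

reference = [0.10, 0.50, 0.90]
encode = [0.20, 0.40, 0.90]  # hypothetical encode that has lost some detail
print(global_ssim(reference, reference), global_ssim(reference, encode))
```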

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

I found that you can set the encoding profile to Main10 or Main10 444, which encodes 10-bit in 4:2:0 or 4:4:4. No 4:2:2, afaik. I only tried H.265, and this was on Windows using Resolve Studio.

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

Makes sense. My questions are partly academic, and partly because I'm doing a lot of animation these days, so picking codecs in ffmpeg is an actual part of the process, haha.

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

@kye Yes, your H.264 files are 8-bit. So in your initial run, were all your test files generated with either Resolve or ffmpeg from your reference file, or were any made directly from the source footage? 1% of the file size is not remotely true, unless you start with a tiny H.265 file and then render it into ProRes, which will increase the size without adding quality. I wouldn't trust much that comes from CineMartin. Of course, the content matters a lot for IPB efficiency, so I guess you might be able to engineer a 1% scenario if your video isn't moving and you use the worst ProRes encoder you can find. (Stuff like trees moving in the wind is actually pretty far toward the "difficult for IPB" end of the spectrum, though it looks like only about half your frame is that tree.) Just btw, in my experience Resolve does a lot better at encoding when you use a preset rather than a defined bitrate. Emphasis: a lot better.

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

I used 4K raw files, exported them as uncompressed 10-bit 4:4:4 HD files, and did all my tests in HD, so yes, I downscaled. That's pretty similar to my workflow, where I always work on an HD timeline using the original files with no transcoding. You can run "ffprobe -i videofile.mp4" and it'll tell you the stream format. There will be a line that goes something like: "Duration: 00:04:56.28, start: 0.000000, bitrate: 20264 kb/s Stream #0:0(eng): Video: h264 (High) (avc1 / 0x31637661), yuv420p, 4096x2160 [SAR 1:1 DAR 256:135], 20006 kb/s, 29.97 fps, 29.97 tbr, 30k tbn, 59.94 tbc (default)". So my example above is 8-bit 4:2:0 (yuv420p). 10-bit 4:2:2 would show yuv422p10le.
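
To check many files at once, the pixel format can be pulled out programmatically. A sketch, assuming `ffprobe` is on your PATH; `describe_pix_fmt` is a hypothetical helper that only understands common planar YUV names such as `yuv420p` or `yuv422p10le` and returns `None` for anything else.

```python
import re
import subprocess

def probe_pix_fmt(path):
    """Return the first video stream's pix_fmt string, as reported by ffprobe."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-select_streams", "v:0",
         "-show_entries", "stream=pix_fmt",
         "-of", "default=noprint_wrappers=1:nokey=1", path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()

def describe_pix_fmt(pix_fmt):
    """Map e.g. 'yuv422p10le' -> ('4:2:2', 10); no bit-depth suffix means 8-bit."""
    m = re.fullmatch(r"yuvj?(420|422|444|440|411)p(\d+)?(?:le|be)?", pix_fmt)
    if m is None:
        return None
    a, b, c = m.group(1)
    bits = int(m.group(2)) if m.group(2) else 8
    return f"{a}:{b}:{c}", bits

# Usage with a real file (hypothetical name):
# print(describe_pix_fmt(probe_pix_fmt("videofile.mp4")))
print(describe_pix_fmt("yuv420p"), describe_pix_fmt("yuv422p10le"))
```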

Prores vs h264 vs h265 and IPB vs ALL-I... How good are they actually?

KnightsFan replied to kye's topic in Cameras

Great tests! One thing I will say is that when I did my ProRes vs H.265 tests, I tested on a larger-than-HD Raw file in order to maximize the quality of the reference file and avoid softness and artifacts from debayering. Additionally, my reference file was 4:4:4 rather than 4:2:2, fwiw. The other thing that would be nice is some files to look at, since while SSIM is great to have, it's not the only way to look at compression. Also, a quick question: are your H.264/H.265 files 4:2:0 or 4:2:2? 10-bit or 8-bit?
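
For a sense of how much data each of those variants carries before any compression, here is the uncompressed size of one HD frame. A sketch that assumes three planar Y'CbCr planes and counts only the meaningful bits; real storage often pads (ffmpeg's `yuv422p10le`, for instance, keeps each 10-bit sample in a 16-bit word).

```python
# Fraction of the luma resolution that each chroma plane (Cb, Cr) carries.
CHROMA_FRACTION = {"4:4:4": 1.0, "4:2:2": 0.5, "4:2:0": 0.25}

def uncompressed_frame_mb(width, height, subsampling, bits):
    """Bits-only size of one Y'CbCr frame in decimal megabytes."""
    samples = width * height * (1 + 2 * CHROMA_FRACTION[subsampling])
    return samples * bits / 8 / 1_000_000

for sub, bits in (("4:4:4", 10), ("4:2:2", 10), ("4:2:0", 10), ("4:2:0", 8)):
    print(f"HD {sub} {bits}-bit: {uncompressed_frame_mb(1920, 1080, sub, bits):.2f} MB")
```

A 4:4:4 reference carries 1.5 times the samples of a 4:2:2 file and twice those of a 4:2:0 file, which is one reason the reference format can shift a metric like SSIM.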

Testing Danne's new EOS-M ML Build (7/29/2020)

KnightsFan replied to SoFloCineFile's topic in Cameras

It looks so much better now, so it was indeed the workflow that was causing issues. There's still some noise in low light, but the mushiness is gone and the color has life in it. Also, I'm not sure if you slowed it down before or had a frame rate mismatch, but the opening shot of the front gate used to have jerky, unnatural movement and looks normal now. One thing, though: you've got some overexposure that wasn't present before. You might want to adjust the curves in Resolve or experiment with the raw settings. The shot of the bust at 0:44 in the original is properly exposed, but the shot of the bust at 0:48 in the new one is blown out. But yeah, it definitely looks like it was captured in Raw now, which the first video did not.