eatstoomuchjam

Members-

Posts

777 -

Everything posted by eatstoomuchjam

-

Sold my Sony a1 but regret it... help me pick a new Sony

eatstoomuchjam replied to Andrew Reid's topic in Cameras

The most surprising part? 35 people have viewed that in the last 24 hours.

-

What are the current security measures? A blood sample sent to Andrew by mail? A video selfie reciting the forum rules to the tune of Good Ship Lollipop? Signing an affidavit to guarantee that you are not Anish Kapoor nor are you affiliated with Anish Kapoor?

-

No. They either use Ambarella (like every other action camera vendor) or make their own (which might be why they're struggling).

GPU, huh? Graphics Processing Unit? You're right. It would be stupid to think that there's anything in a camera that could use a graphics processing unit. Things like web browsing and online money transfer are not done with dedicated hardware - they are done with general purpose CPU cores. In the context of a camera, they would run the operating system, display menus, etc. Audio encoders/decoders ("playing music") are, in fact, useful in cameras. Video editing? Do you mean encoders and decoders for popular video formats? Hmm. Sure would be weird to put those in a camera.

1) This is wrong. Learn how things work before talking about them. The amount of heat generated by "moving electrons" isn't a lot - otherwise, power lines and every electrical cord in the house would be too hot to touch. People would need special coolers installed on the interconnects in their computers between the RAM and the processor. Every RV with a 30A receptacle would need several inches of asbestos by its house connection.

2) Even if it were true, if all other factors are even and every other system has been optimized as much as possible for efficiency, reducing the power draw of any one component is a win.

-

Sold my Sony a1 but regret it... help me pick a new Sony

eatstoomuchjam replied to Andrew Reid's topic in Cameras

I don't think I've seen the ZV-E1 mentioned, but I think it falls into that price range (a bit less when used, even!). Otherwise, the A7 IV is notorious for having really good dynamic range. Could be worth a look.

-

If the smartphone chips are powerful enough to run their infrastructure products, they should. Without knowing which ones you mean, that would be a difficult statement to evaluate. I suspect, though, that you're just making a statement based on not understanding what you're talking about. Keep that up. Maybe you can become the next president of the US.

OK. This is a really dumb argument, sorry. The amount of space is extremely relevant to cooling. How long would it take to heat up a 100 cubic meter room using a constant 5W draw of electricity? How quickly can you heat up a space the size of a smartphone with the same? I promise you that the second answer is a much smaller number than the first.

Besides that, one of the reasons to use a smaller process node is that it uses less electricity to perform the same amount of work. So if all other things are equal and both are performing the same amount of work, a smartphone with a 14nm chip would almost certainly overheat before a smartphone with a 3nm chip.
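To put rough numbers on the room-versus-phone comparison above, here's a back-of-the-envelope sketch in Python. It assumes both spaces are sealed volumes of air with no heat loss at all, which is obviously not how a real room or phone behaves. The air constants, the 10 K temperature rise, and the 100 cubic centimeter phone volume are illustrative assumptions, not measurements.

```python
# Back-of-the-envelope sketch: time for a constant heat source to warm a sealed
# volume of air, ignoring all heat loss. All constants below are assumptions.

AIR_DENSITY = 1.2         # kg/m^3, approximate value at room temperature
AIR_SPECIFIC_HEAT = 1005  # J/(kg*K), approximate value


def seconds_to_warm(volume_m3, power_w, delta_t_k):
    """Seconds for power_w watts to raise volume_m3 of air by delta_t_k kelvin."""
    heat_capacity = AIR_DENSITY * volume_m3 * AIR_SPECIFIC_HEAT  # J/K
    return heat_capacity * delta_t_k / power_w


ROOM_M3 = 100.0   # the room from the post
PHONE_M3 = 1e-4   # hypothetical phone-sized space, about 100 cubic centimeters

room_s = seconds_to_warm(ROOM_M3, power_w=5.0, delta_t_k=10.0)
phone_s = seconds_to_warm(PHONE_M3, power_w=5.0, delta_t_k=10.0)

print(f"room:  {room_s / 3600:.0f} hours")
print(f"phone: {phone_s:.2f} seconds")
print(f"ratio: {room_s / phone_s:.0f}x")
```

Under these assumptions the time scales directly with volume, so the gap between the two answers is just the ratio of the two volumes. A real phone is mostly solid and sheds heat through its case, so treat the absolute numbers as illustration only.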

-

The way you speak about "the way the OS works" sounds like you have absolutely no idea what you're talking about. Do you know the first thing about OS design? And so what if a chip is designed to support a heavier OS and it's overkill for some tasks? If it costs less to buy them in bulk vs designing your own weaker chip - and if the camera has better power consumption, etc... great.

Also, I'm not sure about the state of the art on Android, but it's usually close enough to Apple - and Apple ProRaw is 12 bit. So phones are capable of handling at least 12-bit images in fractions of a second also. If the chips don't support 14 or 16-bit, that's specifically what I referred to with "If dedicated silicon is needed for some encoder or similar, build it as a coprocessor and connect it to the commodity chip."

Current Snapdragon chips built on 4nm cost about $200. This is the very basis of suggesting the use of commodity chips over bespoke or boutique.

Do I need to point out to you the massive size difference (and increased cooling challenges) for a device that's about 1/3 the volume of even the smallest dedicated camera and also needs to stick the image sensor just a few mm from a heat-generating screen that's about 2-3x the size of the screen on every dedicated camera except for Blackmagic?

-

When you say compressed raw, you mean raw LT, right? I hadn't heard anything about fully uncompressed raw - just more compressed (lt) and less compressed (st) formats. 😄

-

Exactly. We aren't talking about putting Instagram on cameras. I don't know what OS runs on Canon now, but it used to be DryOS, and Sony used to use BusyBox, the utility suite typically found on embedded Linux systems (also not sure if that's still the case). They could certainly adapt those systems to run on an off-the-shelf processor. If they do, they would save a bunch of money on designing their own chips and most likely end up with cameras that run faster and cooler, since modern Snapdragons (as an example) are produced on a 3-5nm or so process. Last I heard, the custom chips that are made for a lot of other cameras are still on older processes - I'm not sure if it's still like 20nm, but even if it's 11-14nm, that was the cutting edge on smartphone chips 8 years ago. Plus, as the PetaPixel article mentions, using the latest smartphone chips would make it trivial for the vendors to have good support for the latest Wi-Fi and Bluetooth standards as well.

-

Some of the comments on the PetaPixel story made my head hurt. You're right that a lot of people seemed to think that moving to a smartphone processor would necessarily entail moving to a smartphone OS. I don't actually have any feelings on the OS (though I would find using a standard smartphone UI to navigate my camera annoying, so I'd hope they would put their own GUI on top of things). I didn't realize Toneh actually commented in forums before seeing that (partly because I rarely, at best, read comments on anything).

Some of the comments in the Reddit thread were interesting, though. I didn't realize that Canon was already using Arm in Digic. If so, it seems like adopting a modern mass market chipset shouldn't be an enormous project (not minimizing, it'd still be a lot of work). It really seems like using a general purpose smartphone chipset and bolting on some ASIC coprocessor for any of the heavy lifting that doesn't already have silicon in the smartphone chip would work out pretty great. I can say from personal experience that I never saw any signs of overheating when rolling 6kp24 for a long time on a fairly small fanless Z Cam body - not even on hot days.

Anyway. Armchair pontification aside, it'll just be a distant hope for the time being. 🙂

-

The closest I know to it is Z Cam. From what I read, their first camera, the E1, came from the founder working either for GoPro or for one of the GoPro clones. He thought about taking an action camera chipset and pairing it with a better sensor and a lens mount - and that's exactly what the E1 is. It has some sort of Ambarella chipset (A9?) (and that's also why some of the problems with it could never be fixed).

The E2 line moved away from that, but not too far. Instead of designing their own chip, they still chose an off-the-shelf part from HiSilicon (which ended up causing some drama, as it seemed uncertain that more would get made when the US put restrictions on Huawei stuff). It uses Arm cores and has some dedicated silicon for things like H265 encoding. The Arm bits, at least, are 12nm - and that chip (and the E2 line) came out like 6 or 7 years ago. It's also one of the reasons that Z Cam were known for great battery life at release time - the entire package uses like 3W of power.

https://www.silicondevice.com/file.upload/images/Gid1549Pdf_Hi3559AV100.pdf

Judging by the few released specs of the new flagship V2 series, it seems like they are still using that chipset and still have features comparable to the rest of the cameras being released now. So at least it seems to be possible to build successful cinema cameras using off-the-shelf parts, but you're right that there may be some big barriers for the established companies (though some of those barriers may be in their own heads, believing that they need to design their own).

-

Yes, you seem to be arguing with a point that is the opposite of what I said. I said that camera makers should stop making their own dedicated chips.

https://www.qualcomm.com/products/mobile/snapdragon/smartphones/snapdragon-8-series-mobile-platforms/snapdragon-8-gen-3-mobile-platform

A Google search tells me that this is a chip used in many of the most recent Android smartphones. Some of these phones can be purchased brand new in the US. Looking up some of the phones that use it, some cost about $700 for the entire phone including processor, memory, screen, battery, etc. Some estimates that I saw online say that the chip itself costs about $200 - that's a small fraction of a $5,000 camera.

I am suggesting that instead of investing bazillions of dollars to design a chip as powerful as a smartphone from 5 years ago, camera vendors could go buy an off-the-shelf processor similar to what is used in a modern smartphone. Then they are sharing the bazillions of dollars of R&D expense with OnePlus, Samsung, Xiaomi, and every other integrator who puts that chip in their phone. Why be powered by Digic X when you could be powered by Snapdragon 8 Gen 3? If dedicated silicon is needed for some encoder or similar, build it as a coprocessor and connect it to the commodity chip.
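For anyone who wants the "small fraction" spelled out, here's a quick sketch of the arithmetic in Python. The three dollar figures are the rough ones quoted in the post above (online estimates and example prices, not confirmed costs), and the helper name is made up for illustration.

```python
# Rough cost shares using the figures quoted in the post (online estimates
# and example prices, not confirmed costs).
CHIP_USD = 200      # estimated cost of the Snapdragon 8 Gen 3
PHONE_USD = 700     # retail price of some phones that use it
CAMERA_USD = 5000   # the example camera price from the post


def share(part, whole):
    """Return part as a percentage of whole (hypothetical helper)."""
    return 100.0 * part / whole


chip_share_of_camera = share(CHIP_USD, CAMERA_USD)
chip_share_of_phone = share(CHIP_USD, PHONE_USD)

print(f"chip vs. camera price: {chip_share_of_camera:.0f}%")
print(f"chip vs. phone price:  {chip_share_of_phone:.0f}%")
```

If those estimates are anywhere near right, the chip is a far bigger slice of a $700 phone than it would be of a $5,000 camera.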

-

Sure, $30k seems a lot for a camera, but if I don't spend that, where am I going to get content for my 17K TV? 🙄

-

Metabones Canon EF to Sony E CINE eND Smart Adapter

eatstoomuchjam replied to BTM_Pix's topic in Cameras

There's no doubt it's very useful for a subset of users! I'm only suggesting that they are getting to market behind a lot of others who have some form of swappable ND in the mount, and (aside from you) I'm not sure how many people are apt to run out and buy yet another one when they already have one. 🙂

-

Metabones Canon EF to Sony E CINE eND Smart Adapter

eatstoomuchjam replied to BTM_Pix's topic in Cameras

Nice! Though I thought at first it was a speed booster with eND and was like "Make an RF version and take my money already." Inline ND filter is still cool, but they're a little bit late to a crowded market.

-

The Z Cam E2-F6 Pro uses a single cable for power/signal/control to its 5" monitor, IIRC. Also, I think Arri does it, but that's a bit outside of my price range.

-

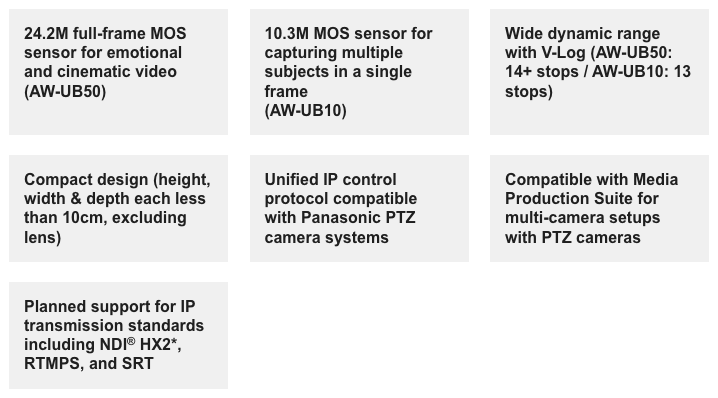

The marketing materials seemed to be making a big deal of their IP connectivity and support for various PTZ protocols. 3 of 7 boxes from Panasonic's page relate to streaming/PTZ/multi-camera. It could be that they're making a play for the church streaming market (which seems weirdly big) as well as things like podcast studios, etc. Overall, it's a ho-hum announcement. Unless the camera is really cheap, I can't see it making much of a splash.

-

Sure - though to be clear, no current Red (that I'm aware of) ships with the DSMC3 Red Touch screen. It's a $3,000 add-on - between a 50% and 150% price premium over the SmallHD screen that they modify (depending on which model of SmallHD). In this regard, the Pyxis monitor is a LOT better. Even without supporting the buttons, if any other cameras support the image/power over USB-C, I'll almost certainly be a customer for it.

-

Whoa, whoa. Lighten up, Francis. If you don't like the stuff, don't engage. There's no need to call other members dumb or untalented.

-

RED doesn't make first-party monitors. They add some capabilities to SmallHD monitors and add the single connection option. As an owner/user of a Komodo-X, I'd say having a screen on the side for settings is really nice (vs fixed top screen). It just seems silly to me that they made a settings/focus puller screen so big.

-

(Or lenses that can be adapted to EF - including Leica R, Olympus OM, and Nikon F)

-

You're making a bunch of incorrect assumptions. If there is a difference in "optical quality" between F and Z mount lenses at medium focal lengths, it likely has more to do with a combination of modern lens design and correcting certain types of lens defect in software, which means fewer compromises in the physical design of the lens to mitigate those problems.

Anyway, assuming that by "optical quality," you mean "sharpness," it's a horribly incorrect assumption that it's what people shooting cinema want. K35s are far from tack sharp. Vintage Zeiss Superspeed lenses aren't all that sharp. Vintage Cookes are FAR from sharp. Modern EF glass was already a lot better in many ways than them - and yet, people love the older lenses and how they looked. Go watch even the trailers for The Witch or The Lighthouse. The latter went even beyond the "modern" lenses that I listed before and was shot, at least partly, on Baltars. Not Super Baltars. Baltars.

Anyway, even if you're talking about autofocus stuff, again, newer EF glass is going to be good enough for most things, and on RF cameras (and, I'm told, on Z cameras with the right adapter) it autofocuses like a native lens. The focus motors on older glass weren't as good, but the newer stuff can focus almost as fast as any native lens that I have for RF mount - and certainly fast enough for any time I've needed/wanted it on a short.

My personal strategy is to focus on EF lenses for anything needing modern sharp glass. I've definitely not had any complaints from anybody that I've worked with that the lenses weren't up to their expectations - whether using the L-series variant or the CN-E variant. It's great - I have no stress about changing between any modern camera systems.

-

Huh? EF lenses work perfectly with an adapter on RF bodies. Just about anybody buying $4,000 cinema lenses will be savvy enough to understand that. Especially at the higher end (V-Raptor, etc), the number of people using native RF lenses on their Red is a lot less than you seem to be suggesting. The cost of a new PL to Z mount adapter ($300ish for a fairly high-end model) is pretty negligible compared with the price of a shiny new V-Raptor ($20k+). The users of the $6k-10k cameras might be more apt to jump ship - then it's more of a question of whether Nikon care about ceding some portion of that market to Canon/Sony.

-

This sort of thing used to come up a lot in the Z Cam user groups. It's not necessarily that most clients on a paid shoot really mind, but there is sometimes an uphill battle in needing to educate the customer. If you tell the customer "I'm showing up with a Red" or "I'll be there with my FX6" - or insert any boxy prosumer camera in here, there's no education or discussion needed. Even though the Netflix approved camera list is truly only relevant to people who are shooting a made-for-Netflix production, it could also be a useful tool - "I'll be showing up at your shoot with this camera which, as you can see, is used to make productions for Netflix." Even for non-paid cinema work that I do, people get a lot more excited if I show up with the Komodo-X than they do if I show up with the C70 or Z-Cam or GFX 100 II. There's no need for them to be - every one of those cameras can make a really nice image.

-

I've always assumed that the various "rumors" sites were fed largely by the execs at those companies who want to do "soft announcements" of their products to watch for reactions and to drive up engagement/interest/curiosity. That way they are never actually on the hook for things. Looks like the new camera will support 8kp160? Leak it to the rumor site. When it actually supports 8kp24? "Hey, hey, we never said it would. That was just a rumor."

-

When I saw yesterday that you will now be able to do 4k120p ProRes on a phone, I imagined the shame being felt by any number of camera companies who claim they can't do it on their dedicated cameras. Not sure yet how long the iPhone can roll 4k120 without overheating, but Canon should be making a face like the meme awkward puppet monkey right about now...

It's increasingly obvious that camera vendors should stop trying to build their own special magic chips (Bionz! Digic! X-Processor Pro!) and figure out how to adopt current-generation smartphone processors. Why build a dedicated chip on a 14nm process when you could build your system on a much more powerful chip that was built on 2nm, uses less power, and runs so much cooler?

A lot of vendors should feel relief that the companies who have (so far) tried to blend phone and camera have done such a tremendously terrible job of integration. Eventually somebody's gonna get it right.